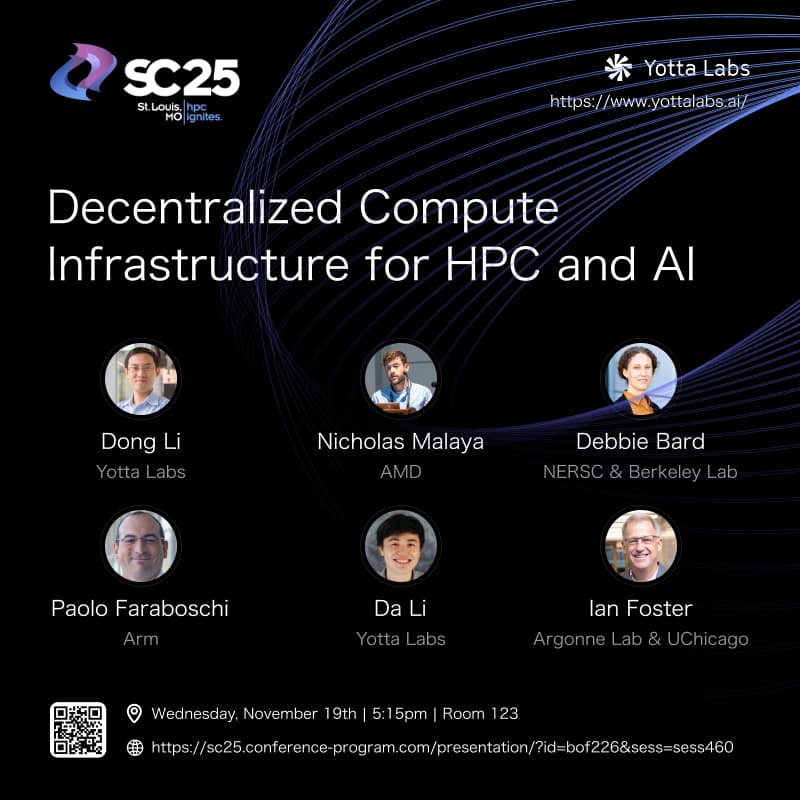

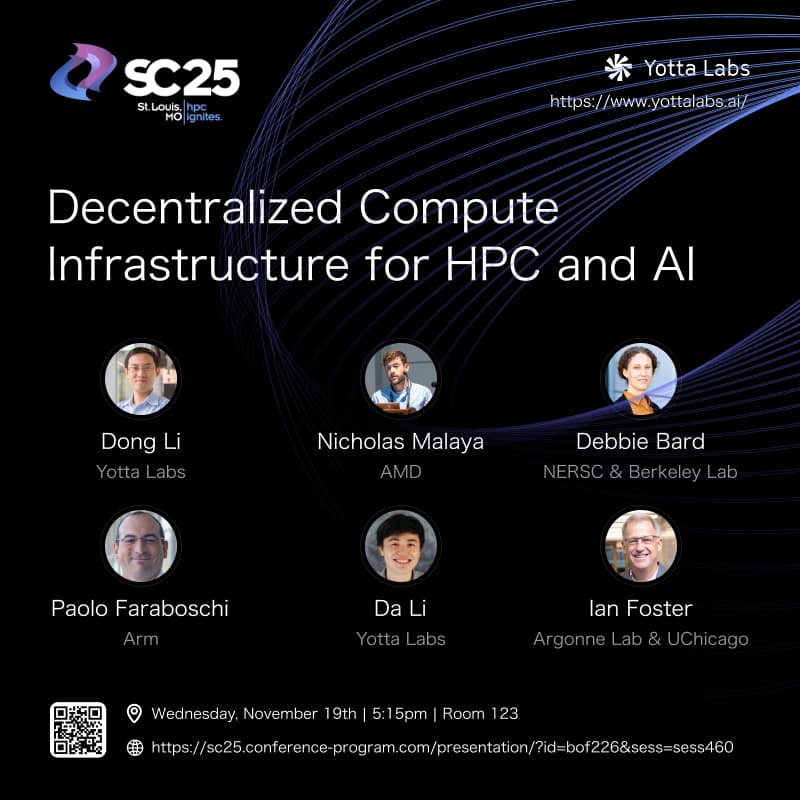

SC25 - BoF: Decentralized Compute Infrastructure for HPC and AI

SuperComputing 2025 is less than a month away, and we're excited to host a Birds-of-a-Feather panel session on “Decentralized Compute Infrastructure for HPC and AI.”

As high-performance computing (HPC) and artificial intelligence (AI) workloads continue to expand in scale, centralized data center models are running into critical bottlenecks in sustainability, scalability, and resilience. This BoF will convene researchers, industry leaders, and practitioners to explore decentralized architectures that leverage edge computing, federated orchestration, modular micro data centers, and decentralized governance models.

Goals of the Session

Drive a dynamic discussion on the architectural shifts needed to support decentralized computing for HPC and AI.

Exchange experiences and best practices from research labs, academic institutions, industry practitioners, and cloud providers.

Identify shared challenges—including data sovereignty, security, and workload scheduling—while brainstorming collaborative solutions.

Build momentum toward standardization and interoperability frameworks that enable scalable, efficient, and secure distributed infrastructures.

Topics of Exploration

The panel will explore a broad spectrum of issues central to the future of decentralized computing, including:

Architectural Models – Comparing centralized vs. decentralized HPC/AI architectures, deployment of edge clusters, and federated supercomputing networks.

Intelligent Resource Management – AI-driven orchestration, decentralized workload balancing, and data-locality optimizations.

Security & Governance – Identity federation, zero-trust models, and secure multi-institution collaboration.

Interoperability & Standards – Open-source frameworks, APIs, and benchmarking for reproducibility across environments.

Sustainability – Renewable energy integration, carbon-aware workload placement, and energy-efficient regional HPC design.

Real-World Use Cases – From scientific instrumentation at the edge to climate modeling and global-scale AI training.

Collaboration & Adoption – Funding models, institutional case studies, and pathways to long-term operational sustainability.

Speakers

Dr. Dong Li - Associate Professor at UC Merced, Co-founder & Chief Scientist of Yotta Labs

Dr. Debbie Bard - Department Head for Science Engagement and Workflows at NERSC

Dr. Ian Foster - Professor & Scientist at Argonne National Laboratory and the University of Chicago

Dr. Nicholas Malaya - Fellow, High Performance Computing, AMD

Dr. Paolo Faraboschi - Vice President, Performance Engineering, Arm

Dr. Da Li - Co-founder & CEO of Yotta Labs

Why This Matters for the HPC Community

This BoF directly aligns with the mission of SC25: advancing innovation in HPC, networking, storage, and analysis. As data-intensive AI and HPC workloads strain the limits of centralized infrastructures, decentralized data centers provide a compelling alternative—enhancing resilience, geographic accessibility, and democratization of compute resources.

Attendees will gain fresh insights into the next generation of compute infrastructure while contributing to a collective dialogue that could shape future standards, open-source frameworks, and community-driven collaborations.

❗️Important: A valid SC25 ticket is required for in-person attendance. Registered participants will receive the panel details and summary by email following the event.

About Yotta Labs (yottalabs.ai)

Yotta Labs is building efficient and interoperable AI infrastructure at planetary scale. At its core, the Yotta orchestration platform acts as an AI-native operating system: it enables AI interoperability by bridging heterogeneous GPUs, specialized accelerators, and distributed data centers, so customers can run AI workloads anywhere, on any provider, and scale seamlessly.