Bliss Reading Group - May 11

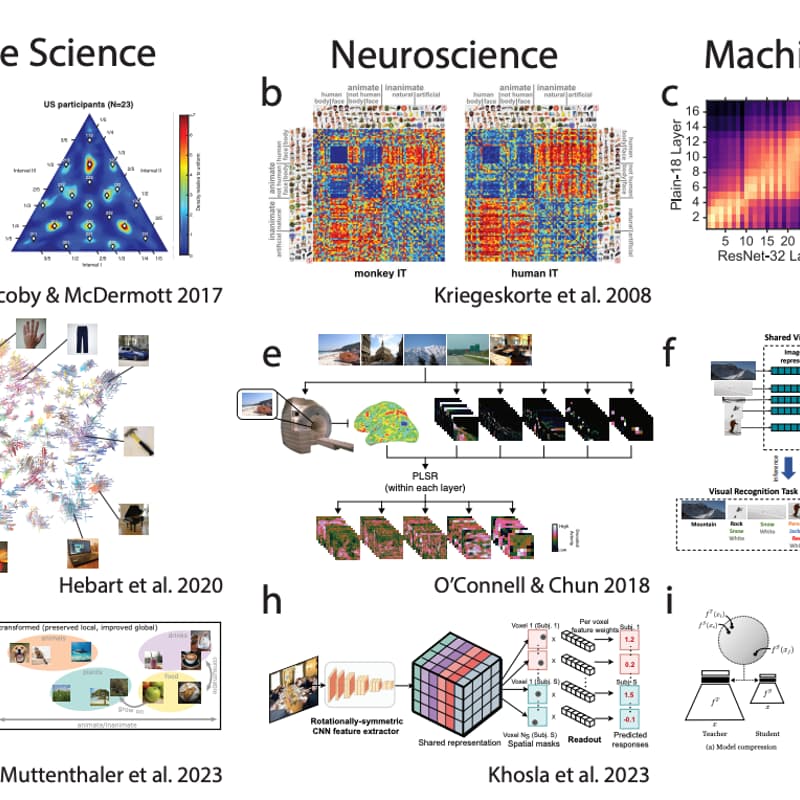

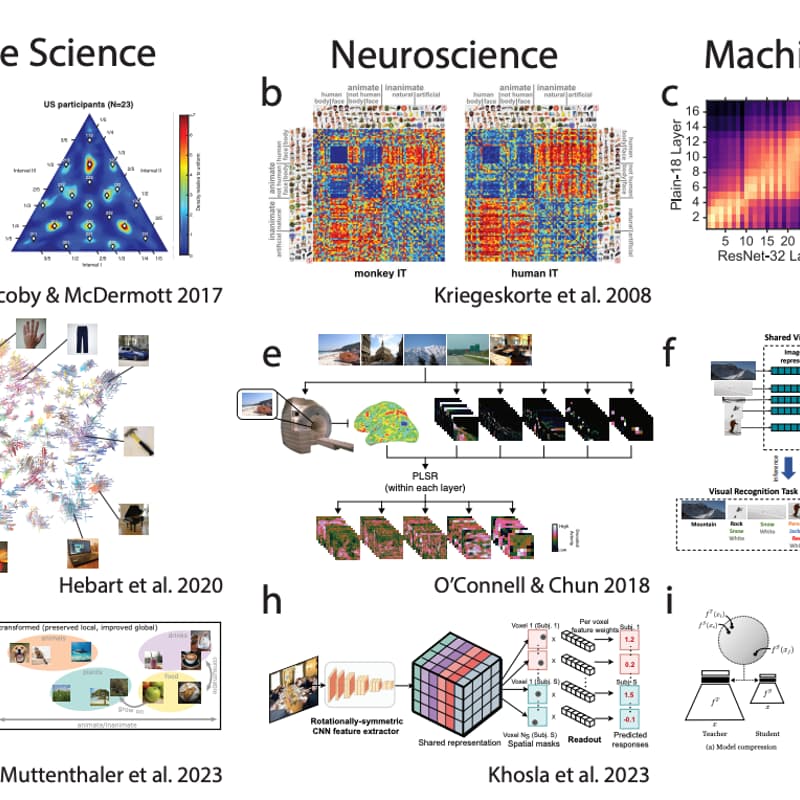

For our third session, we shift gears. Where the first two papers dealt with alignment in the AI safety sense, this week we turn to a different meaning of the word: representational alignment, that is, whether different information-processing systems form similar internal representations of the world.

Our paper is *Getting aligned on representational alignment* (Sucholutsky et al., 2023), hosted by Tom Neuhäuser.

This is a broad Perspective paper with over 30 authors spanning cognitive science, neuroscience, and machine learning. It asks: how do we measure whether a neural network and a human brain (or two neural networks, or two brains) represent the same stimulus in similar ways? Do similar representations lead to similar behaviour? And can we modify one system's representations to better match another's?

This paper opens up rich territory for discussion: What should we actually want when we say we want AI representations to be "aligned" with human ones? Is representational similarity even the right goal, or is behavioural equivalence enough? And how does this notion of alignment relate to the safety-oriented alignment we explored in the first two sessions?

Join us for a lively and interesting discussion!