Bliss Reading Group - June 1

Continuing our world models track with Leo Pinetzki, this session takes a big step from MuZero's task-specific learned models toward something more ambitious: world models as generative engines.

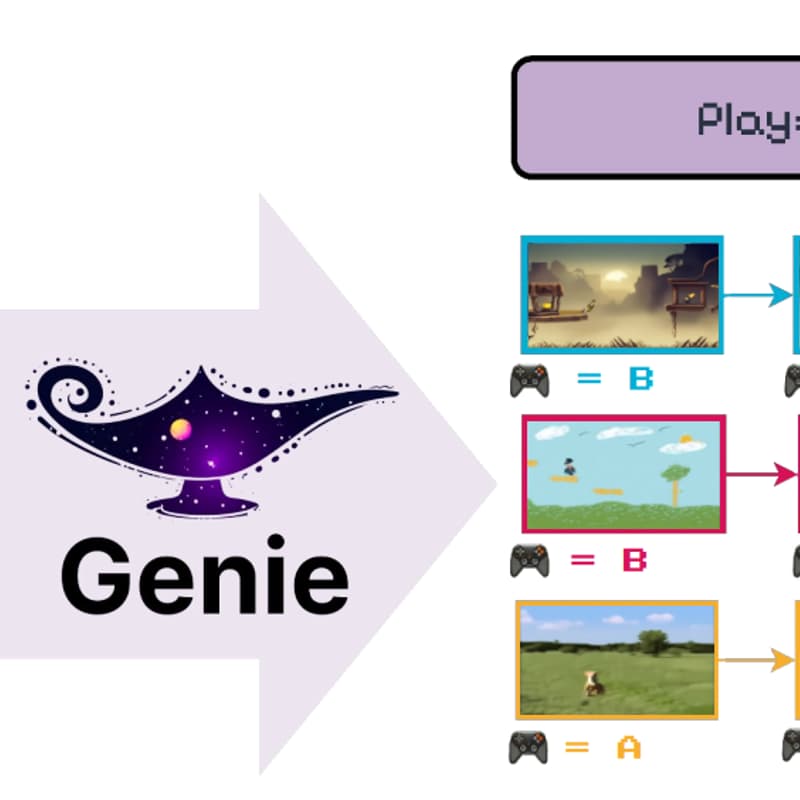

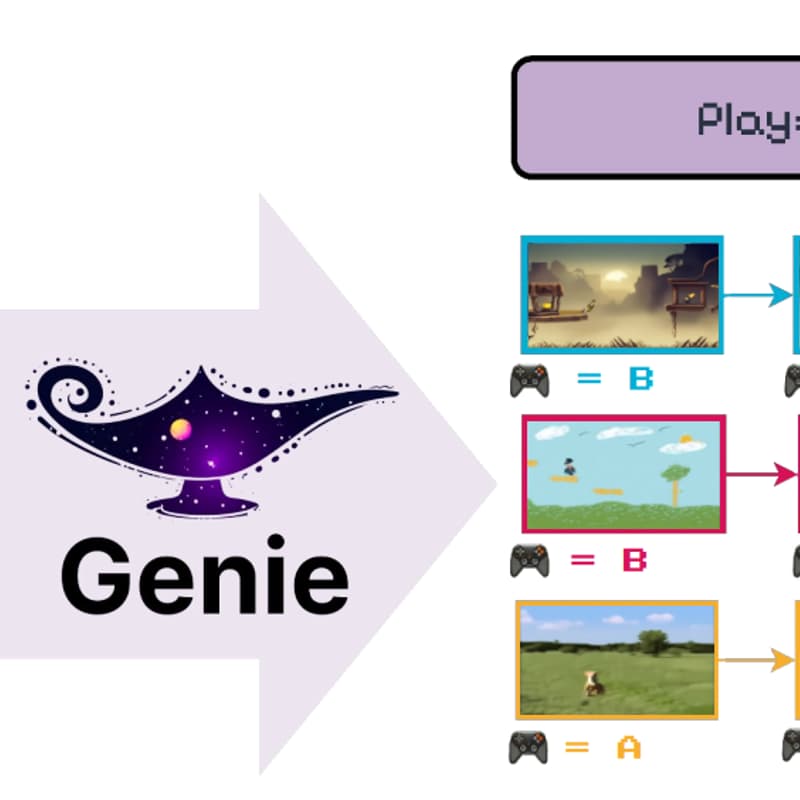

Our paper is Genie: Generative Interactive Environments (Bruce et al., 2024).

Where MuZero learned an internal model to plan within a single game, Genie learns to generate entire interactive environments from scratch. Trained on over 200,000 hours of unlabelled Internet videos of 2D platformer games, this 11B-parameter model can take a text prompt, a photo, or even a sketch and produce a playable virtual world — frame by frame, responding to user actions.

This is a very different flavour of world model from MuZero, and the contrast is productive: Is a model that generates plausible-looking next frames really "understanding" dynamics, or just doing very good video prediction? What's gained and lost when you move from compact latent models to full pixel-level generation? And how far is this approach from being useful outside of 2D games?

Join us for a lively and interesting discussion!