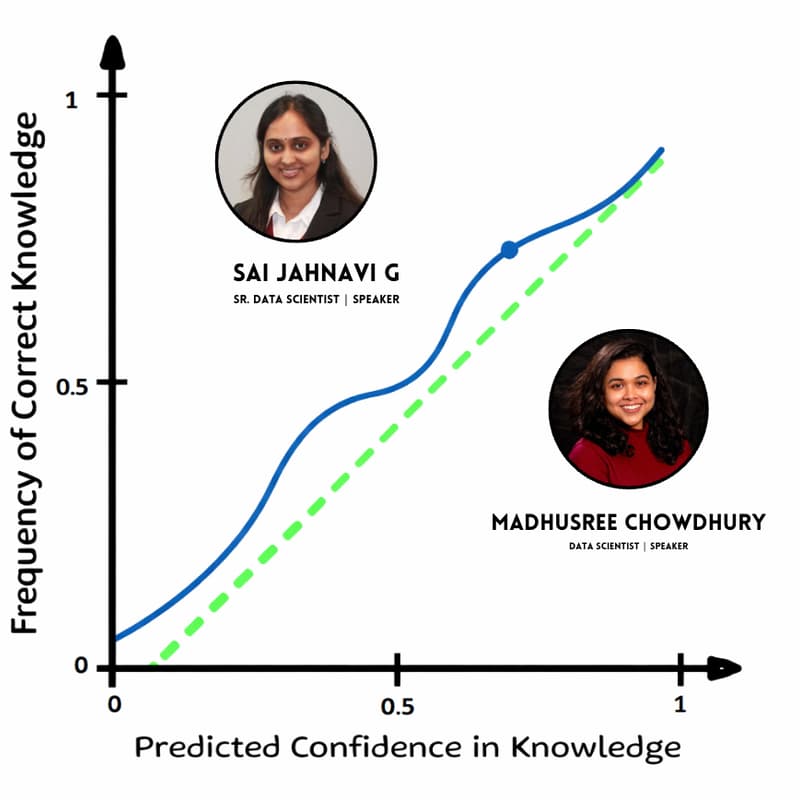

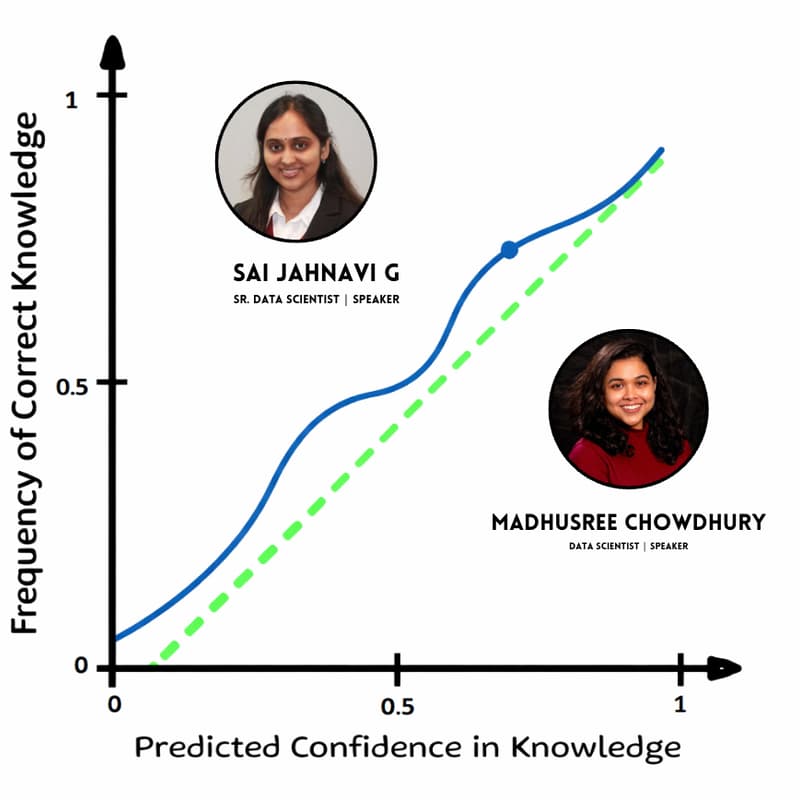

Calibration Plots — Making Model Confidence Trustworthy

Model accuracy tells you how often a model is right, but calibration tells you whether its confidence can be trusted.

In this short, practical webinar, we’ll show how to read model confidence from calibration plots, walk through real-world case studies, and cover simple fixes for common calibration issues.

The session focuses on intuition and real-world relevance rather than heavy math, helping you understand when models are confidently wrong, how to detect it, and what to do next.

Who Should Attend

This webinar is ideal for:

- Data scientists and ML engineers working with probabilistic models
- Aspiring data scientists or anyone breaking into data science
- Anyone who has ever asked, “Can I trust this probability?”

No prior experience with calibration is required; familiarity with basic machine-learning concepts is sufficient.