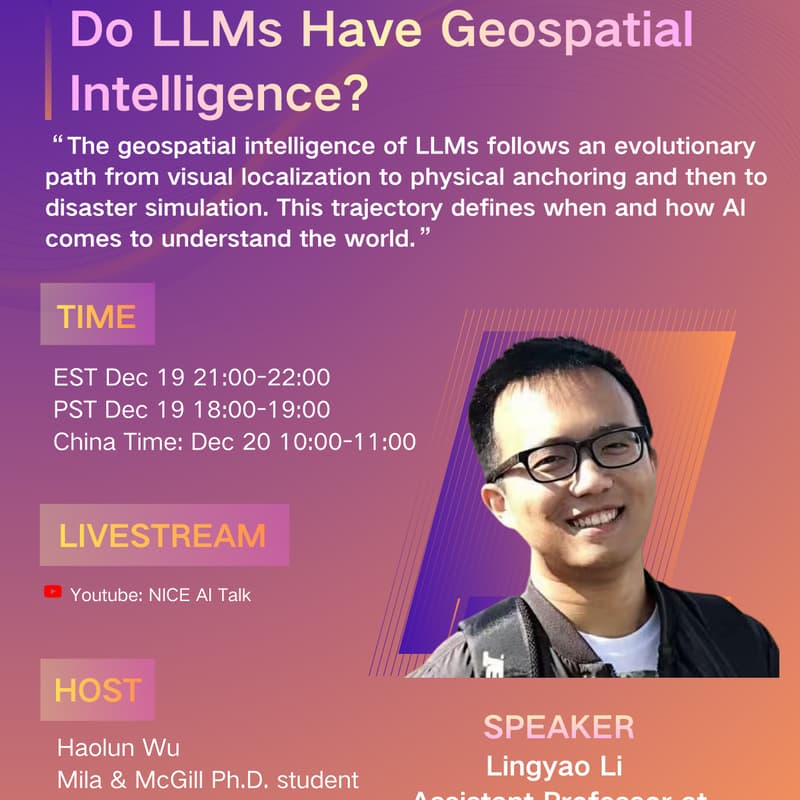

Do LLMs Have Geospatial Intelligence? Exploring Their Abilities in Localization, Hazard Reasoning, and Event Simulation

Talk Abstract

Do large language models (LLMs) possess geospatial intelligence, meaning the ability to localize places, understand spatial context, and reason about real-world hazards? This talk addresses that question through a unified investigation of how state-of-the-art LLMs interpret visual geography, integrate physical-world data, and simulate human-perceived disaster impacts. First, we benchmark LLMs’ geolocalization capabilities by introducing IMAGEO-Bench, a framework that examines how models localize images by extracting landmarks, architectural cues, and environmental context. This analysis reveals both the spatial reasoning capabilities and the systematic geographic biases of LLMs. Moving beyond localization, we introduce the Geospatial Awareness Layer (GAL), which grounds LLM agents in structured Earth data, including terrain, infrastructure, demographics, and weather, to support hazard reasoning during active wildfires. By converting geospatial signals into task-ready “perception scripts,” these grounded agents generate more stable, evidence-based recommendations for personnel deployment and operational planning in wildfire disasters. Finally, we investigate the potential of LLMs as world models for conducting pre-event simulations in seismic contexts. Our results demonstrate that incorporating geospatial, socioeconomic, and building data together with street-level imagery can produce estimates of seismic impact that align with USGS “Did You Feel It?” reports. Collectively, these studies trace a progression from visual geolocation to geospatial grounding to human-centered simulation, offering a comprehensive view of how and when LLMs meaningfully connect with the physical world, and where fundamental gaps remain.

Our Speaker

Dr. Lingyao Li is an Assistant Professor in the School of Information at the University of South Florida. Before joining USF, he completed his postdoctoral research at the University of Michigan School of Information and earned his Ph.D. in Civil Engineering from the University of Maryland. His research uses artificial intelligence, especially large language models (LLMs), combined with crowdsourced social media and mobile data to address socio-technical challenges in urban and health informatics. His current research encompasses three main areas. First, his human–AI interaction research investigates the bidirectional impacts between AI models (e.g., chatbots and roleplay tools) and their users, with particular attention to mental health implications. Second, building on his civil and environmental engineering background, he examines the emerging potential of LLMs in urban science and geospatial intelligence, exploring whether and how LLMs can interpret place, reason about spatial context, and support data-driven decision-making for disasters and community resilience. Third, he studies LLM-based multi-agent systems for enabling complex reasoning, collaborative problem-solving, and interactive decision support in healthcare domains such as clinical diagnosis and triage ranking. Together, these research directions reflect a cohesive agenda aimed at understanding and advancing LLMs’ capabilities in human-centered environments while ensuring their responsible, trustworthy, and societally beneficial use.

Our Host

Haolun Wu is a fourth-year PhD candidate at Mila & McGill and a visiting scholar at Stanford. His research interests include trustworthy AI and LLMs, information retrieval, personalization, human-AI alignment, and AI for education. He has interned at Microsoft Research, Google, and DeepMind; his work has been deployed in MSR Alexandria knowledge base construction and applied to the Google Shopping recommendation platform. He has published in top venues across several areas (e.g., NeurIPS, ICML, ICLR, EMNLP, SIGIR, WWW, CHI, CSCW, TMLR, TKDE) and serves as a reviewer.