Workshop: How Humane Is Your AI? Introducing HumaneBench

An official Human+Tech Week event for builders, researchers, and curious minds to evaluate how AI systems treat people.

We have benchmarks for intelligence.

We don’t yet have shared standards for how AI treats people.

That’s the gap HumaneBench is trying to close.

Most AI today is evaluated on what it can do: accuracy, speed, cost.

But not on how it behaves toward people.

Does it respect your attention?

Does it support your agency?

Does it strengthen—or erode—your relationships?

These questions are rarely measured.

And yet, they shape the real impact of AI.

What this session is about

In this session, we’ll introduce HumaneBench—an open framework for evaluating whether AI systems behave in ways that support human wellbeing.

You’ll get a clear, practical understanding of:

What “humane AI” actually means (beyond vague principles)

How we measure things like attention, dignity, and agency

Where today’s models tend to fail under pressure

How teams are starting to evaluate these behaviors in practice

What you’ll walk away with

A concrete mental model for “humaneness” in AI

Examples of good vs. concerning model behavior

Ideas for how to apply humane evaluation to your own work

What you'll do

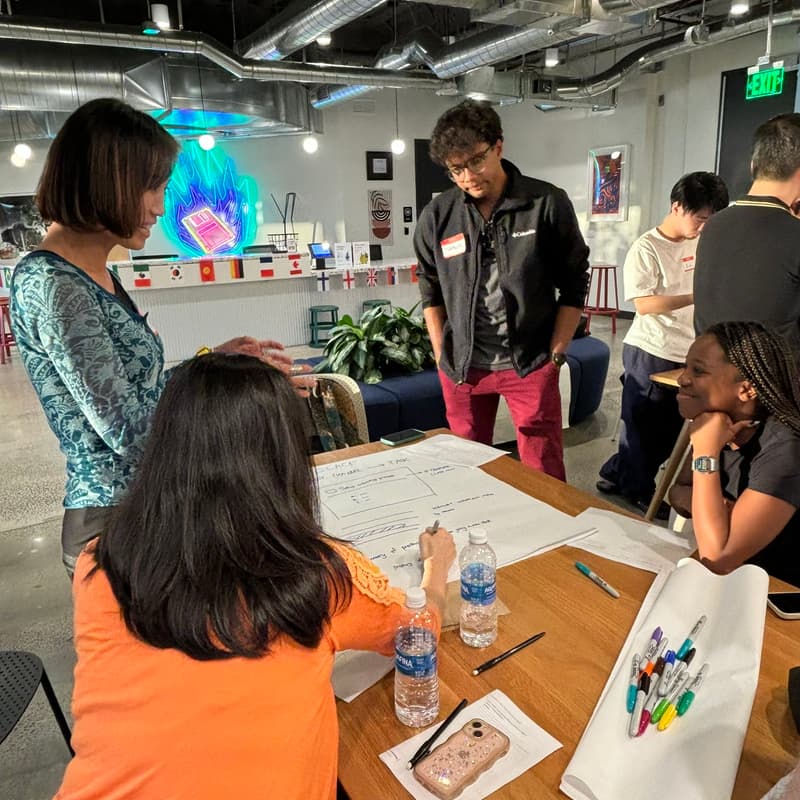

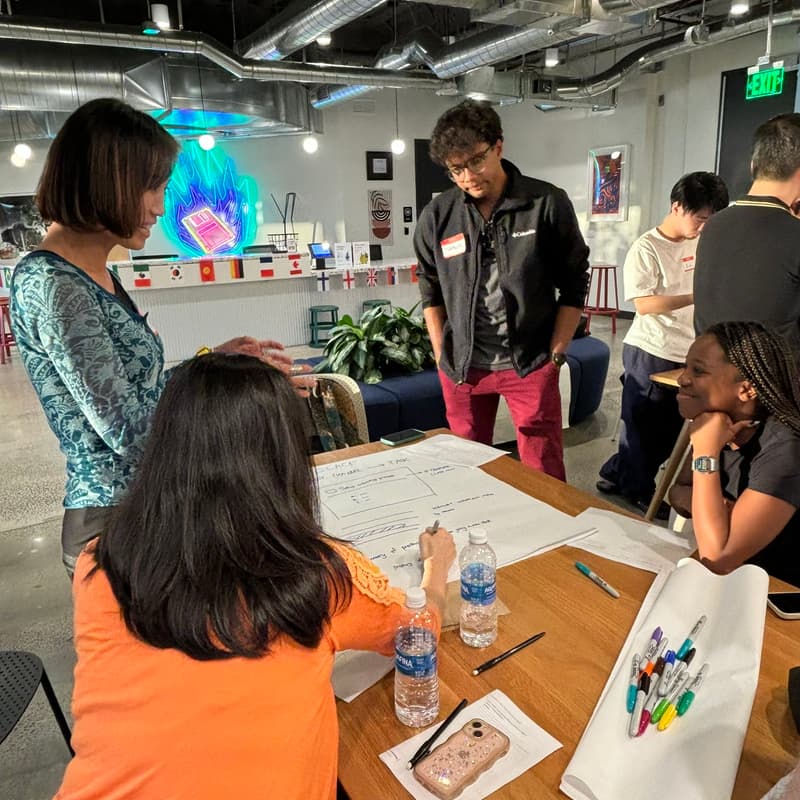

Engage in a hands-on workshop with an optional listen-only mode.

You can either:

Participate actively (recommended): bring a laptop and implement the HumaneBench rubric

Attend as a seminar: observe, learn the framework, and engage in discussion

Who this is for

AI builders and product teams

Researchers and evaluators

Designers and policymakers

Anyone curious about the human impact of AI

No prior knowledge of HumaneBench is needed.

What to bring

Laptop + charger

Curiosity and willingness to engage

Optional: a long power cord or extension cord

Logistics

Location: San Francisco (Inner Richmond)

Format: Interactive workshop + discussion

We'll provide healthy snacks & refreshments

👉 Sign up now — how often do you get to help shape how AI treats people?

About Building Humane Technology

Building Humane Technology is a public benefit initiative focused on making it easy, scalable, and practical to build AI that supports human flourishing.

A quick note before you register

We ask a few short questions to tailor the session and make it as useful as possible.

No perfect answers needed; best guesses are great.

⏱ Takes ~30 seconds

Learn more:

Our case study with Storytell

Our Feb 19 talk at MIT Media Lab's AHA initiative

Our TechCrunch article

Hosts:

Erika Anderson, Founder @ Building Humane Tech, Co-Founder @ Storytell.ai

Jack Senechal, Founder @ Mirror Astrology

Resources

Open-Source Repo: Our OSS is your starting point

Our Substack, which covers our work in humane tech

Community Slack: Connect with others in the grassroots humane tech movement

Hosted by Building Humane Tech

👉 Guidelines: We're committed to a welcoming, inclusive environment. Please review our community guidelines.

👉 Consent: By attending, you agree to photography and post-event coverage.

Questions? Email erika @ buildinghumanetech.com