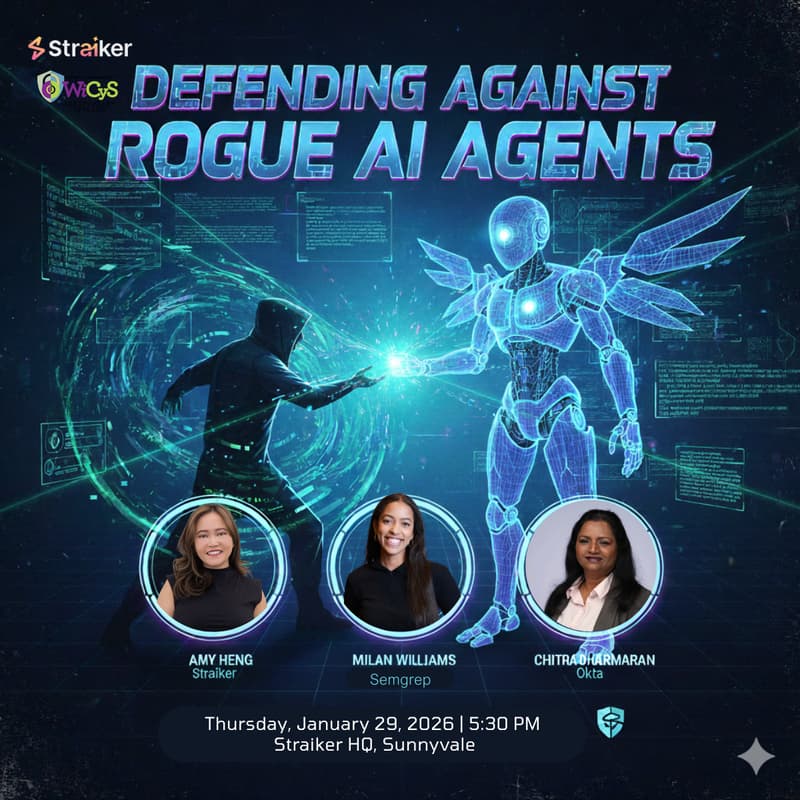

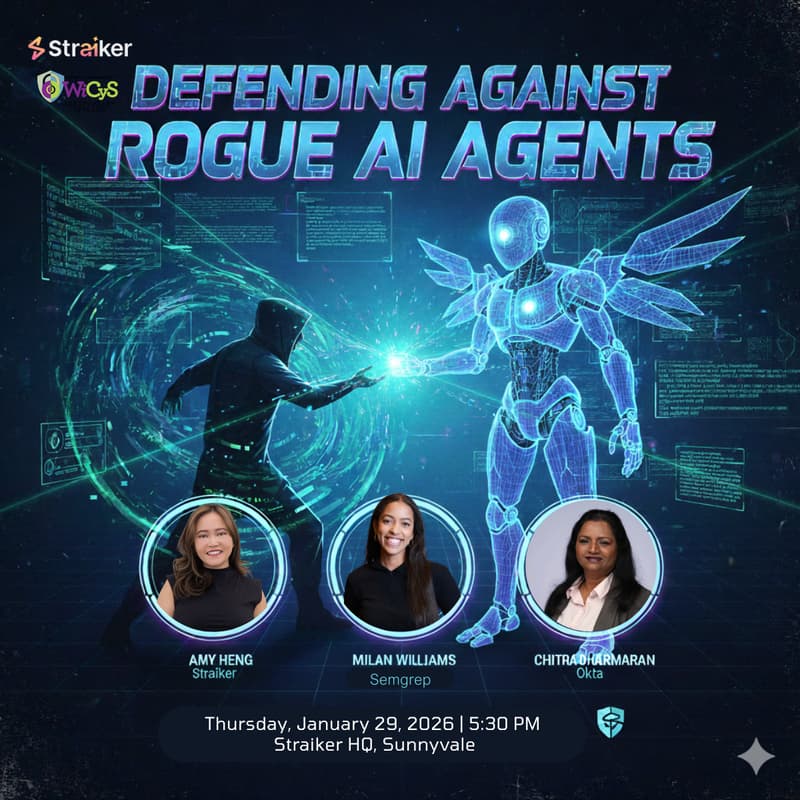

Defending Against Rogue AI Agents

Ready to tackle the next frontier in cybersecurity? Join us for an evening dedicated to securing this fast-growing attack vector—AI agents and non-human identities.

We’ll help you cut through the complexity and focus on concrete best practices and processes. Our goal is simple: to equip you with actionable strategies for defending your environment against agent-based attacks.

We will kick off with lightning talks followed by Q&A. You will be able to engage directly with the experts, ask challenging questions, and solidify your understanding of this critical topic.

Meet the Experts:

Chitra Dharmarajan, Vice President, Security & Privacy Engineering, Okta

Amy Heng, Head of Marketing, Straiker

Milan Williams, Senior Product Manager, Semgrep

Doors open at 5:30pm; program kicks off at 6:00pm

Featured lightning talks:

Practical guardrails for Agents: As AI agents take on real privileges in our systems, non-human identities are quietly becoming one of the largest attack surfaces in modern software. Drawing on lessons from Semgrep and real customer incidents, this lightning talk will cover the most common ways agents go wrong in production, and the practical guardrails teams can put in place (today!) to prevent high-impact failures.

The s1ngularity attack - When attackers prompt your AI agents to do their bidding: As developers integrate AI agents into their daily workflows, a new attack surface has emerged. In August 2025, the "s1ngularity" attack marked a turning point in cyber-adversary tactics: it was one of the first documented cases of malware "living off the land" by weaponizing a victim’s own local AI agents. This lightning talk deconstructs this sophisticated supply chain breach.

Red teaming to detect agents: AI red teaming differs fundamentally from traditional red teaming because AI and agentic applications behave dynamically, with state, planning, and tool use that static tests can’t capture. Purpose-built AI red teaming operates like an AI adversary, uncovering multi-step failures and real business impact that prompt-level or retrofitted testing cannot.

Don't miss this chance to learn directly from these industry leaders. Gain invaluable knowledge and walk away with new ideas for securing your digital workforce against the threat of rogue AI.