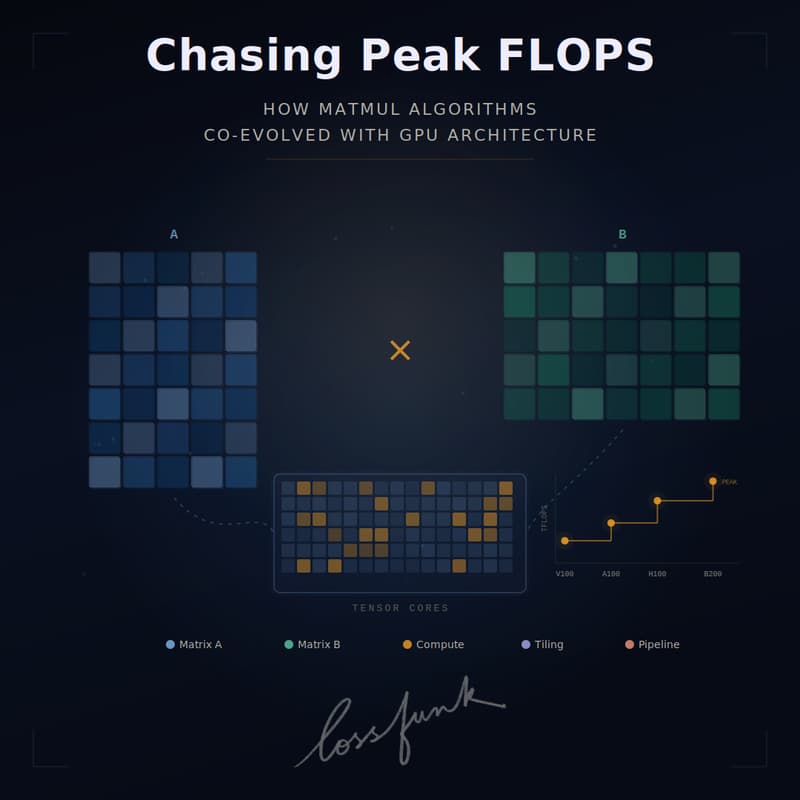

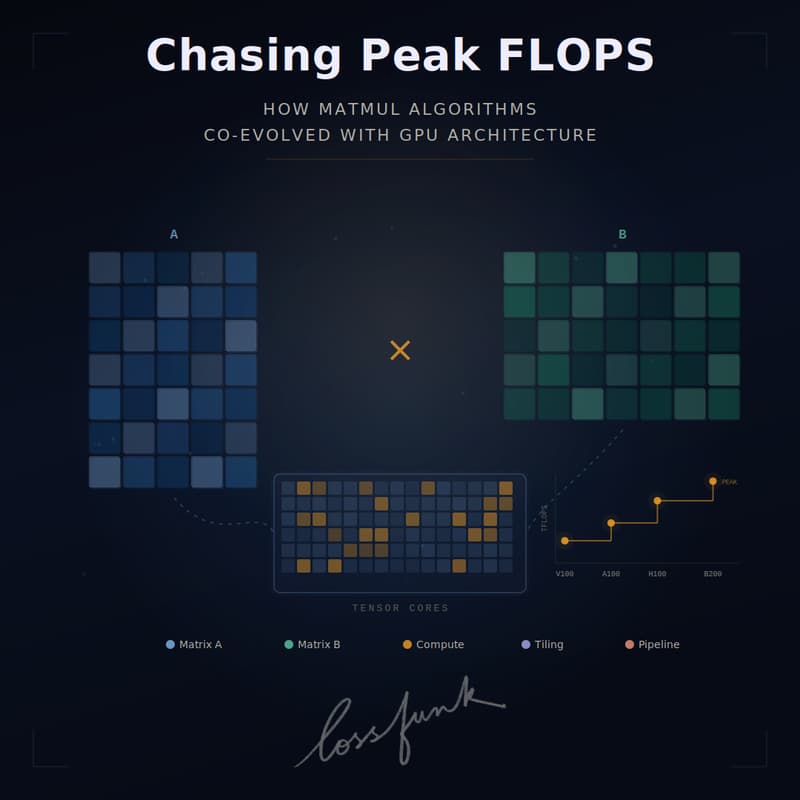

Chasing Peak FLOPS: How MATMUL Algorithms Co-Evolved with GPU Architecture

In this talk, Prashant will explain how matrix multiplication (GEMM) dominates deep learning workloads and how GEMM algorithms have co-evolved with GPU architectures from Volta to Blackwell. The talk covers tiling, tensor cores, and advanced techniques such as warp specialisation, persistent kernels, and async pipelines for maximising FLOPS and hardware utilisation.

He'll walk us through the core machinery: how tiling strategies decompose massive matrix problems into cache-friendly chunks, how tensor cores moved matrix math from scalar ALUs into dedicated fixed-function units, and how the programming model evolved to keep these units fed.

About the speaker:

Prashant Kumar is a Member of Technical Staff (MTS) at Cohere, where he works on pretraining large models and scaling them across GPUs.

LinkedIn: https://www.linkedin.com/in/prashantkumar25/

To attend online:

Add to calendar: https://shorturl.at/ab9AV

Google Meet link: meet.google.com/wac-vnrb-miv

Pre-read: https://www.nvidia.com/en-us/on-demand/session/gtcspring23-s51413/

We look forward to seeing you!