Train Rich, Test Poor: Distilling Privilege into Language Models

What if your model could learn from information it will never have at test time?

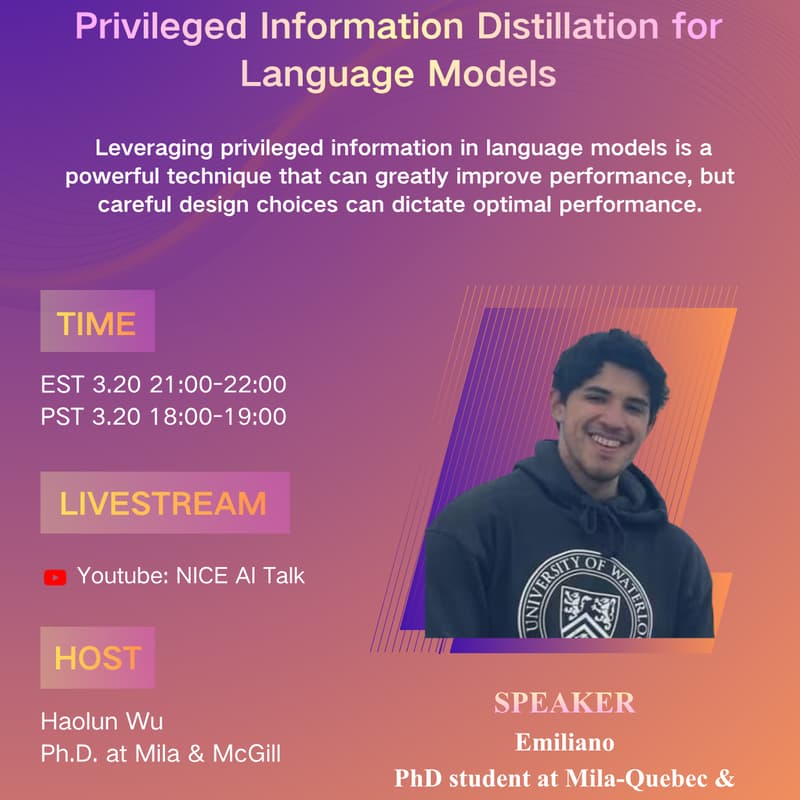

Welcome to NICE TALK 148!

YouTube livestream: https://www.youtube.com/watch?v=SUb4M73q658

This talk introduces Privileged Information Distillation, a new framework for training language models with rich hints and then deploying them without those hints. By distilling knowledge from a stronger, privileged policy into a practical one, the approach outperforms standard post-training methods. The result: models that are not just stronger, but also more robust and generalizable when it actually matters.

Speaker:

Emiliano is a PhD student at Mila-Quebec and the Université de Montréal, supervised by Laurent Charlin and supported by an NSERC PGS-D award. His research focuses on enhancing the capabilities and alignment of LLM agents in complex, multi-step environments by leveraging their conversational properties at the user level. Previously, he was a visiting researcher at ServiceNow Montreal, working with Massimo Caccia on LLM reasoning for agentic tasks.