AI Safety Papers We Love #1: Multi-Agent Risks from Advanced AI

AI Safety Papers We Love is a biweekly reading group: we pick papers we appreciate about AI safety, broadly construed (technical alignment, governance, interpretability, etc.), and talk them through together.

The paper: Multi-Agent Risks from Advanced AI, Hammond et al. (2025) https://arxiv.org/abs/2502.14143

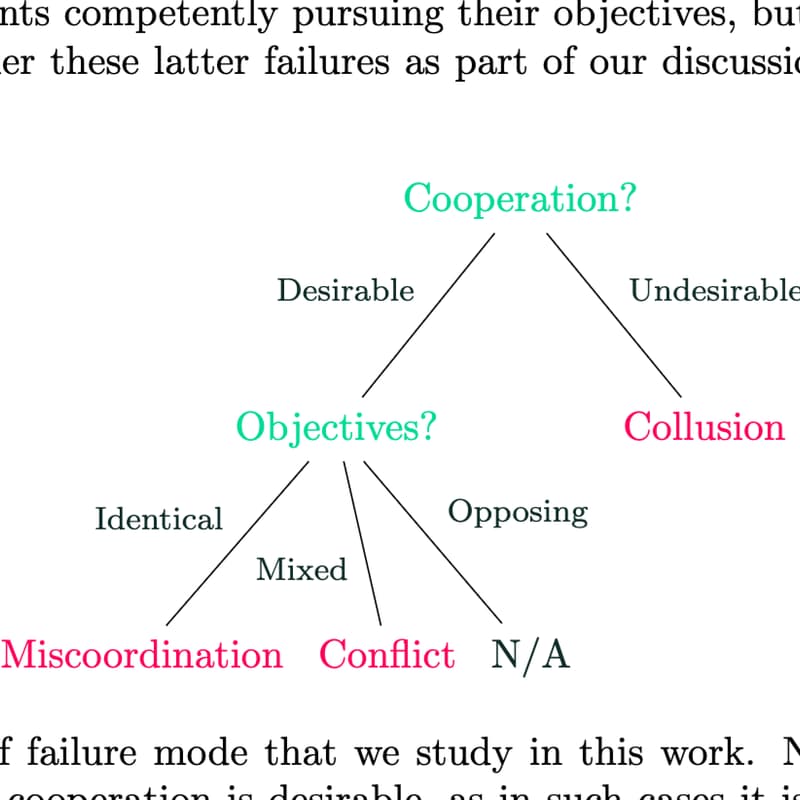

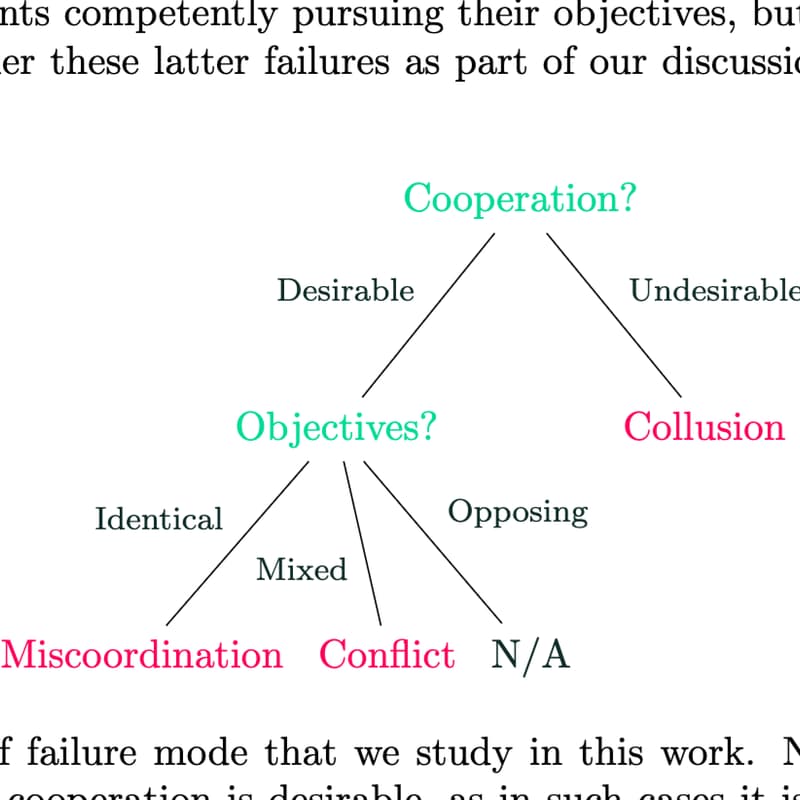

A structured taxonomy of the risks that arise once AI agents are deployed and interacting at scale. The authors identify three failure modes grounded in agents' incentives (miscoordination, conflict, and collusion) and seven underlying risk factors that drive them: information asymmetries, network effects, selection pressures, destabilising dynamics, commitment problems, emergent agency, and multi-agent security. Each comes with examples and evidence, and the paper points towards mitigations. A useful map of a threat model that single-agent alignment doesn't cover.

Presenter: Orpheus

Format

18:30 - arrive, say hi

18:45 - 25 min presentation of the paper

19:10 - 45 min open discussion

19:55 - pitches for next papers

20:30 - end

Read the paper if you can. Come curious.

Practical

PWYC. RSVP required, ~10 spots.

Where: Ω Labs, 3813 Saint-Denis.

Contact the host or call +1 438-476-8403 if you need assistance.

The event is bilingual, but for simplicity this description is in English.