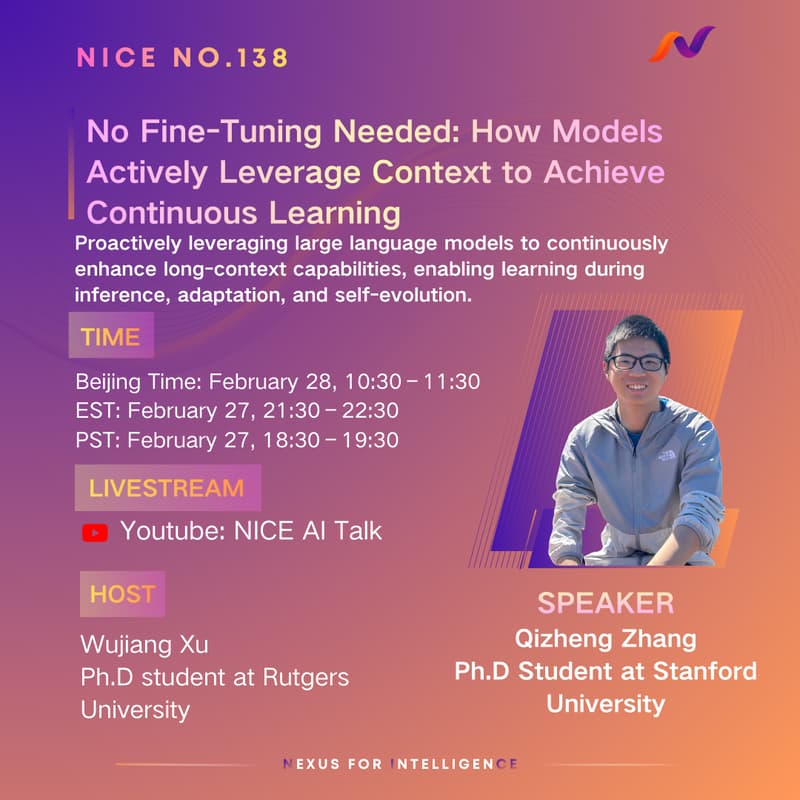

No Fine-Tuning Needed: How Models Actively Use Context for Continuous Learning

About the Talk

As large language models (LLMs) support longer context windows, long context has become a powerful but underused capability.

Most approaches treat long context as passive input expansion. This leads to two problems:

Context cannot accumulate experience across tasks

Longer context increases cost without stable performance gains

In this talk, we introduce Agentic Context Engineering (ACE), a new paradigm that treats context as an evolving learning and memory system.

ACE uses a generate–reflect–curate loop to:

Identify high-value experiences

Turn them into reusable context

Enable learning during inference

Improve performance without updating model parameters
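To make the loop concrete, here is a toy sketch under stated assumptions. It is not the ACE implementation from the paper. The three roles are replaced by deterministic stand-in functions instead of LLM calls. The names `EvolvingContext`, `Lesson`, `generate`, `reflect`, `curate`, and `run`, along with the `topic`/`hint` task schema, are all hypothetical. The sketch shows one idea only: a failure is turned into a reusable lesson, the lesson is merged into the context, and later tasks of the same kind succeed without any change to model parameters.

```python
from dataclasses import dataclass, field


@dataclass
class Lesson:
    """One reusable piece of experience stored in the context (hypothetical schema)."""
    text: str
    uses: int = 0  # how many later successes this lesson was present for


@dataclass
class EvolvingContext:
    """Context kept as itemized lessons keyed by topic, not one monolithic prompt."""
    lessons: dict[str, Lesson] = field(default_factory=dict)

    def render(self) -> str:
        # The text that would be prepended to the model's prompt.
        return "\n".join(f"[{k}] {v.text}" for k, v in self.lessons.items())


def generate(task: dict, ctx: EvolvingContext) -> bool:
    """Stand-in for the model call: succeeds only if a relevant lesson is in context."""
    return task["topic"] in ctx.lessons


def reflect(task: dict, success: bool) -> dict[str, str]:
    """Stand-in for reflection: turns a failed attempt into a candidate lesson."""
    if success:
        return {}
    return {task["topic"]: task["hint"]}


def curate(ctx: EvolvingContext, task: dict, success: bool, delta: dict[str, str]) -> None:
    """Merge a small delta into the context instead of rewriting it wholesale."""
    if success:
        ctx.lessons[task["topic"]].uses += 1
    for topic, text in delta.items():
        if topic not in ctx.lessons:  # deduplicate by topic
            ctx.lessons[topic] = Lesson(text)


def run(tasks: list[dict], ctx: EvolvingContext) -> list[bool]:
    """Generate, reflect, and curate once per task; return per-task success."""
    results = []
    for task in tasks:
        ok = generate(task, ctx)
        curate(ctx, task, ok, reflect(task, ok))
        results.append(ok)
    return results


tasks = [
    {"topic": "dates", "hint": "Normalize dates to ISO 8601 before comparing."},
    {"topic": "paging", "hint": "Follow the next-page cursor until it is empty."},
    {"topic": "dates", "hint": "Normalize dates to ISO 8601 before comparing."},
    {"topic": "paging", "hint": "Follow the next-page cursor until it is empty."},
]
ctx = EvolvingContext()
print(run(tasks, ctx))  # [False, False, True, True]
print(ctx.render())
```

The "model" here never changes. The repeated `dates` and `paging` tasks succeed only because the lessons curated from the first attempts are now part of the context. Storing lessons as separate items and merging small deltas, rather than regenerating the entire context on each step, is a design choice made in this sketch for clarity.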

We evaluate ACE across multiple agent tasks, including long-horizon execution and cross-task transfer. Results show consistent performance improvements with better efficiency.

Paper:

Agentic Context Engineering: Evolving Contexts for Self-Improving Language Models (ICLR 2026)

https://arxiv.org/abs/2510.04618

Speaker: Qizheng Zhang

PhD Candidate, Stanford University (https://alex-q-z.github.io/)

Research: continual learning and self-improving AI systems

Host: Wujiang Xu

PhD Candidate, Rutgers University

Research: LLM agents and reinforcement learning