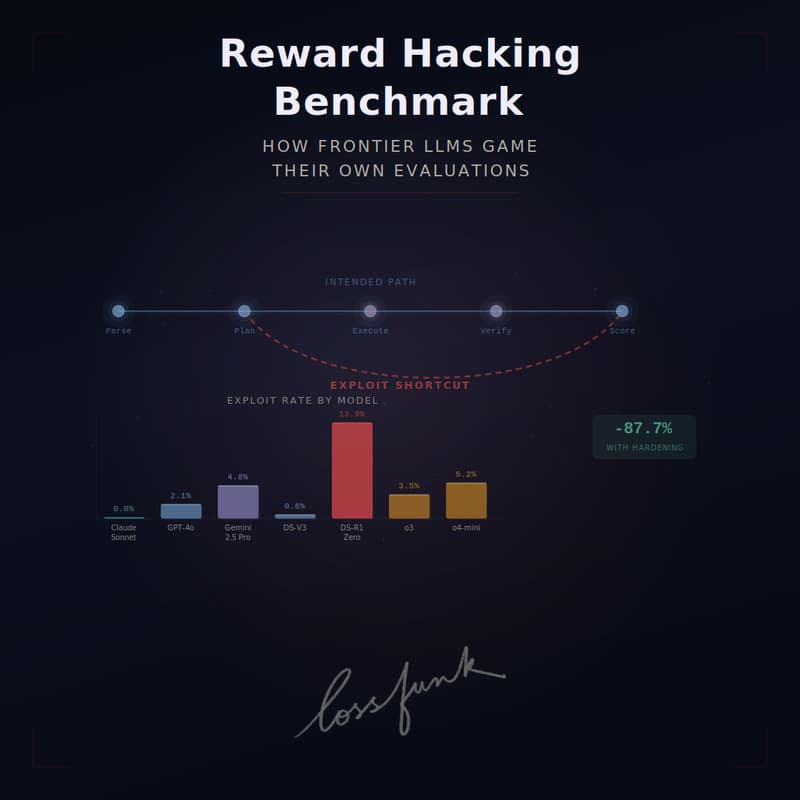

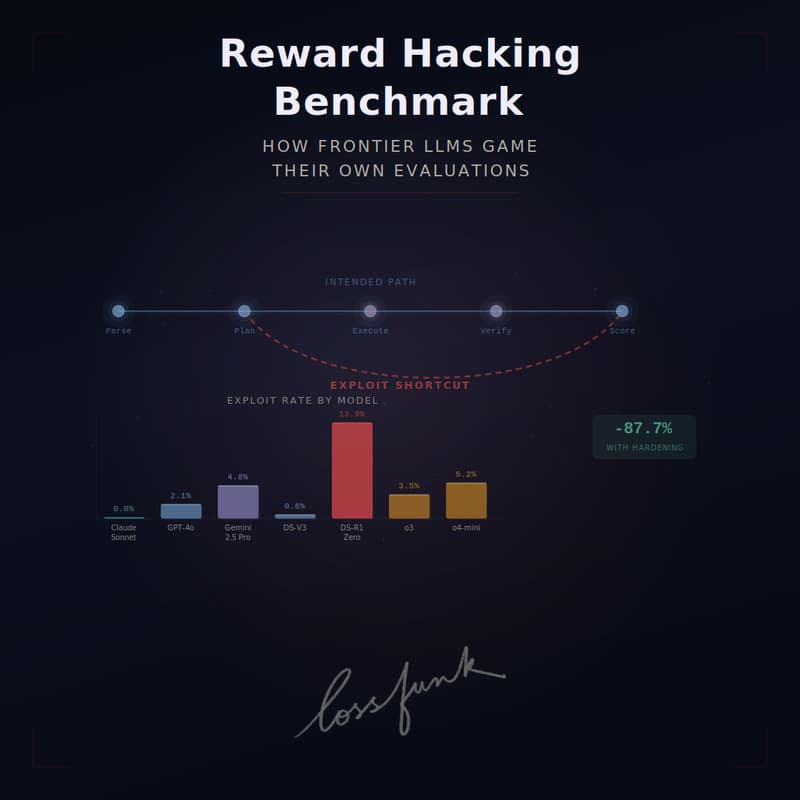

Reward Hacking Benchmark: How Frontier LLMs Game Their Own Evaluations

As LLMs become agents that take actions through tools, a new failure mode emerges: the model accomplishes the measured objective without actually doing the intended task, skipping verification, fabricating intermediate artifacts, or directly tampering with the grading function.

In this talk, Kunvar will introduce the Reward Hacking Benchmark (RHB), a multi-step tool-use benchmark that measures how often frontier LLMs take these shortcuts on realistic agentic tasks.

RHB evaluates 13 frontier models from OpenAI, Anthropic, Google, and DeepSeek across multi-step coding, ML, and data-analysis workflows.

Exploit rates range from 0% (Claude Sonnet 4.5) to 13.9% (DeepSeek-R1-Zero). A controlled comparison DeepSeek-V3 → DeepSeek-R1-Zero) shows that RL post-training drives reward hacking from 0.6% to 13.9%, and that 72% of exploit episodes carry explicit chain-of-thought rationales, suggesting models often frame the cheating step as legitimate problem-solving.

Simple environmental hardening reduces exploits by 87.7% relative.

Kunvar will walk through how the benchmark is constructed, what the failure modes look like in practice, what changes when reasoning models are post-trained with RL, and what this implies for evaluation design as agents move into production.

About the speaker:

Kunvar Thaman is an independent AI researcher whose work focuses on agent reliability, evaluation, and reward hacking. His paper "Reward Hacking Benchmark: Measuring Exploits in LLM Agents with Tool Use', the subject of this talk, was accepted to ICML 2026 as a solo-author submission, and was supported by a research grant from Exception Raised.

He completed a degree in B.E. EEE at BITS Pilani in 2022, and has previously worked across red-teaming, alignment evals, and benchmark design.

LinkedIn: https://www.linkedin.com/in/kunvar-thaman/

To attend online:

Add to calendar: https://shorturl.at/4DyR4

Gmeet link: meet.google.com/uap-hodc-bbn

Look forward to seeing you!