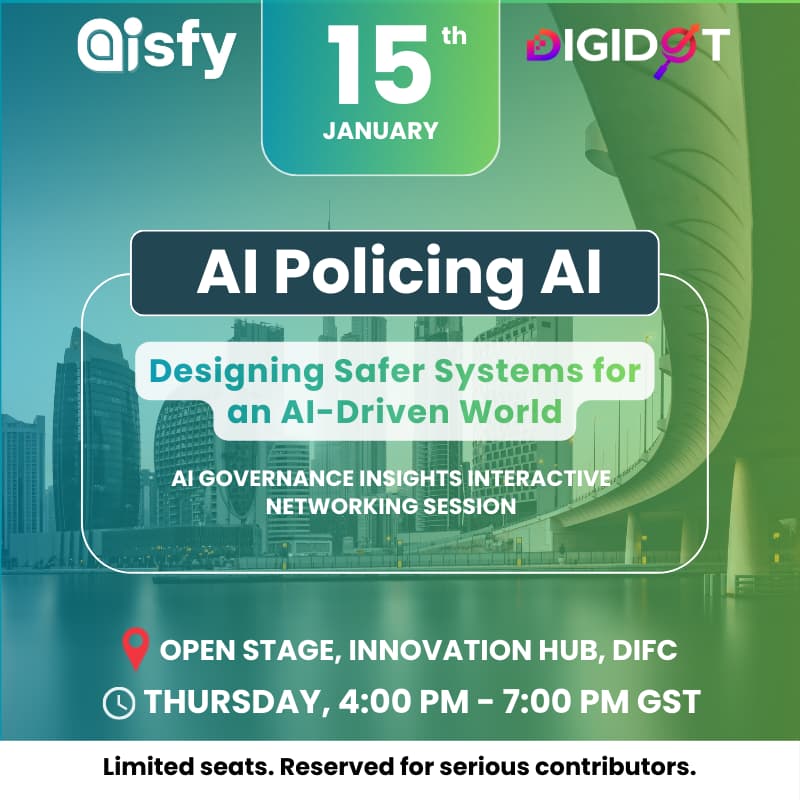

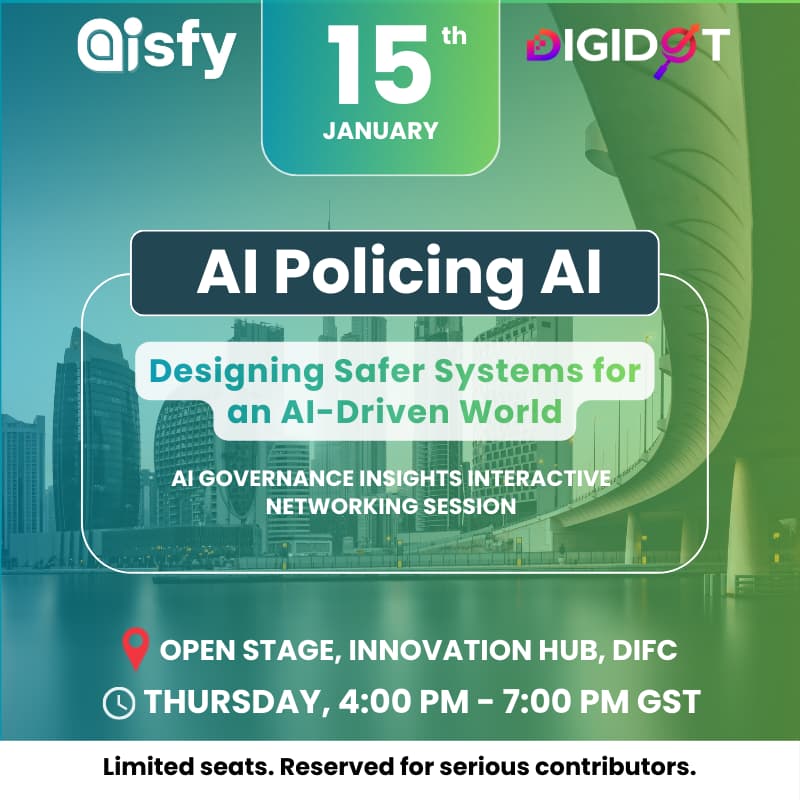

AI Policing AI: Designing Safer Systems for an AI-Driven World

Where AI builders, regulators, and thinkers reshape the future of intelligent systems, one case at a time.

🔍 What It’s About

Each month, we pick one major AI headline (a data breach, bias scandal, lawsuit, model leak, or ethical dilemma) and reverse-engineer it using real governance principles.

From event → cause → consequence → prevention.

We break down:

✅ What went wrong

✅ Which ethical, legal, or cultural principles were breached

✅ How AI tools should be designed to prevent similar failures

✅ What this means for the future of intelligent systems

This isn’t theory.

This is applied governance: real cases, real impact, real solutions.

💡 Why It Matters

AI is crossing the threshold from tool to autonomous actor. The systems we design today will determine the moral direction of machines tomorrow.

This forum helps you:

✅ Stay ahead of AI ethics, compliance, and global standards

✅ Learn from real incidents, failures, and legal cases

✅ Contribute insights to how governance must evolve

✅ Build with responsibility from the start, not as an afterthought

Because building AI is no longer enough.

We must govern it, by design.

👥 Who Should Attend

✅ Policymakers & compliance leaders

✅ Developers, engineers, & product teams

✅ Educators, researchers, and ethicists

✅ ESG, AI risk, and data governance teams

✅ Curious citizens shaping the future of AI

🖊️ No technical background required, just a clear mind and an ethical compass.

🔹 Event Agenda Overview — AI Policing AI

1️⃣ THE PULSE — Agentic AI & Security

Real-world incidents and why autonomous systems now pose system‑level risk.

2️⃣ THE WHAT — Engineering Agentic AI for Safety & Scale

What AI agents are becoming, and how they’re being built, deployed, and scaled today.

3️⃣ THE WHY — Why AI Now Affects Every Society

The ethical, social, cultural, and human consequences of unchecked AI autonomy.

4️⃣ THE WHO — Who Is Most Affected & Who Is Responsible

Founders, engineers, regulators, institutions — accountability in the agentic era.

5️⃣ THE HOW — How to Engineer Safer Systems

Practical frameworks, lifecycle governance, and containment by design.

6️⃣ THE BUILD — Let’s Co‑Create Safer Systems

Interactive workshop translating insight into real safeguards and action.

Every session ends with:

✅ Key lessons

✅ Design recommendations

✅ Published community notes

📌 What You’ll Walk Away With

✅ Monthly intelligence on AI trends that actually matter

✅ A deeper understanding of how ethics translates into design

✅ Practical frameworks for governance, compliance, and risk

✅ A growing network of serious, values-aligned professionals

🗓️ When & Where

🕕 15th January 2025 / Thursday | 04:00 PM to 07:00 PM GST

✅ 4:00–4:30 PM – Check-in & Networking

✅ 4:30–5:10 PM – Expert Panel Discussion

✅ 5:10–7:00 PM – Interactive Group Simulation, Case Study, Group Presentation

📍 Open Stage, Innovation Hub, DIFC

🎙️ Hosted by: AI Digidot Ltd (creators of Aisfy)

🅿️ Parking Information – DIFC Innovation Hub

• First hour of parking is complimentary.

• Standard rate: AED 10 per hour.

• Parking redemption: You can redeem 4 hours of parking with a minimum spend of AED 75 at any outlet within Gate Avenue. Redemption is available at the reception.

📍 Parking Location:

Gate Avenue Visitors Parking D

Zaa'beel Second, DIFC, Dubai, United Arab Emirates

Google Maps:

https://maps.app.goo.gl/4Vg9Go3wQMo8JHgW7

🧩 Join Us

If AI is going to change the world, we must decide what kind of world it will become.

Register. Contribute. Shape the future.

✅ Disclaimer

Photos and videos may be taken during the event. By registering, you agree to receive event-related updates. All personal data will be handled responsibly in line with GDPR. You may request correction or deletion at any time.