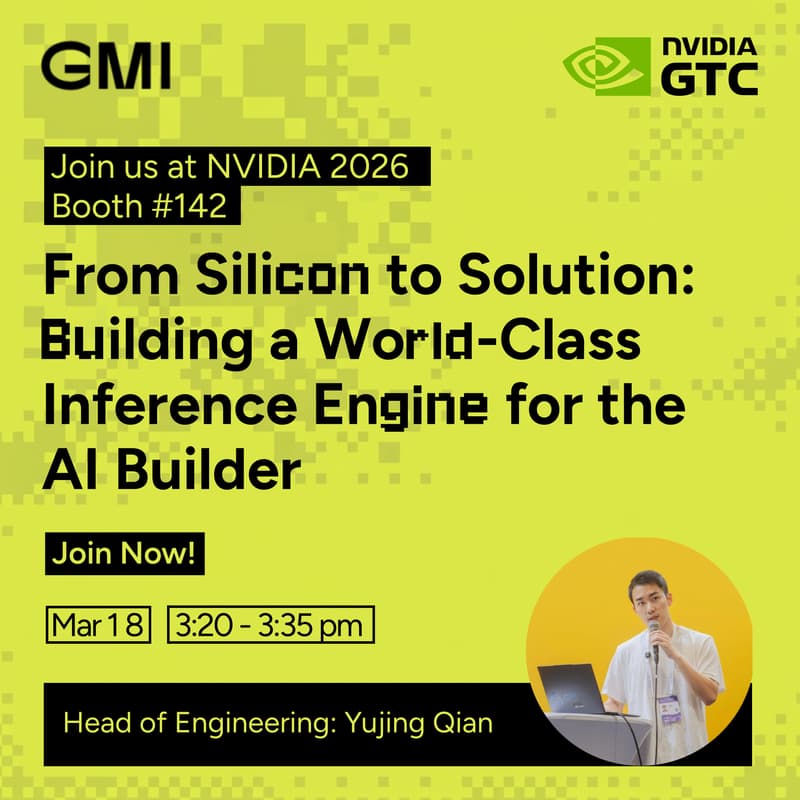

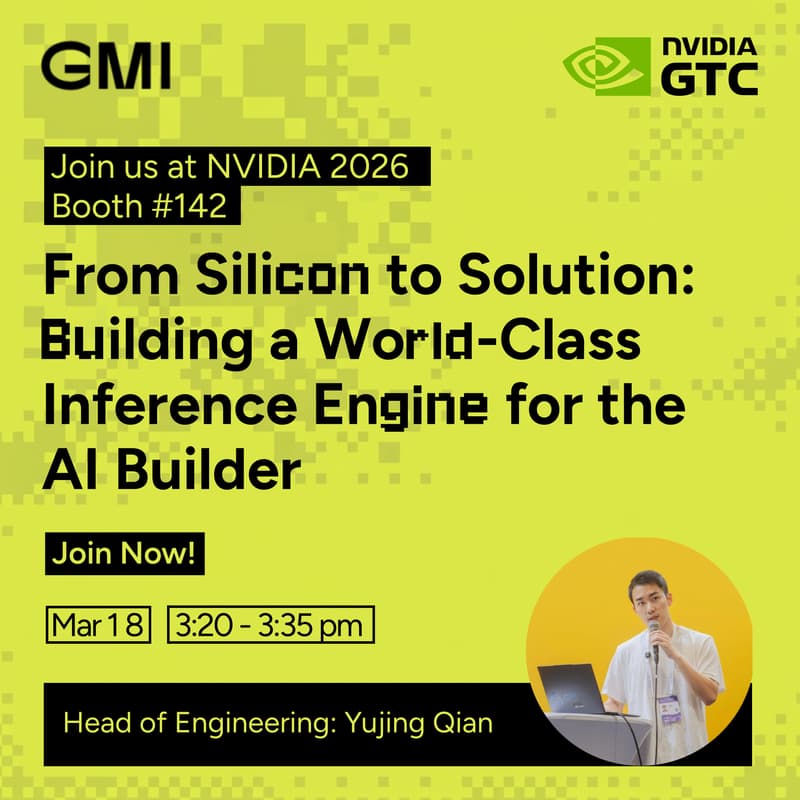

From Silicon to Solution: Building a World-Class Inference Engine for the AI Builder

Join GMI Cloud and Yujing Qian (Head of Engineering) for a deep dive into how modern inference systems are evolving in the era of next-gen GPUs.

As new architectures like Blackwell push the limits of compute, many production systems face unexpected bottlenecks in latency, cost, and scaling. This session explores what actually changes at the system level, and how to rethink your inference stack for real-world workloads.

What You’ll Learn

• Why traditional inference assumptions break on next-gen GPU architectures

• How to redesign batching, scheduling, and concurrency strategies

• Key architectural shifts for better latency and cost efficiency at scale

• What real production traffic reveals about inference system behavior

Why Attend

If you're building or scaling AI inference systems, this session will give you a clearer framework for moving from raw compute power to production performance.

⚡ Join us live at Booth #142.