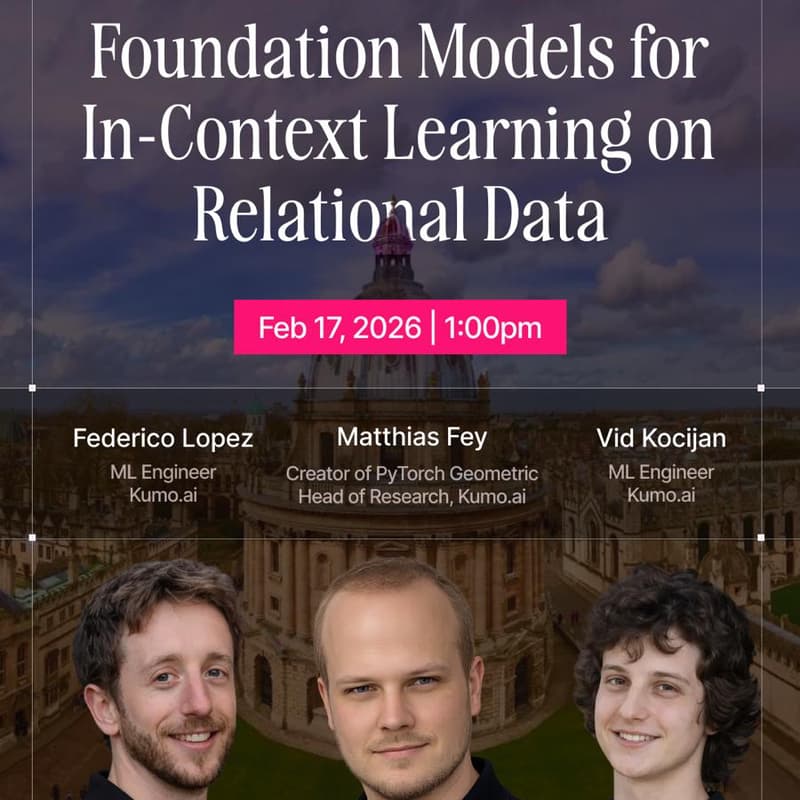

LoG² - Learning on Graphs and Geometry

While foundation models have revolutionized learning on unstructured data, structured enterprise data remains siloed in relational databases, where each predictive task typically requires labor-intensive, task-specific model development.

In this talk, we introduce the Kumo Relational Foundation Model (KumoRFM), a pre-trained architecture designed to generalize across arbitrary relational schemas and diverse predictive tasks without retraining.

Central to this approach is the paradigm of Relational Deep Learning (RDL), which represents relational databases as temporal heterogeneous graphs where records are nodes and primary-foreign key links define the edges.
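To make the graph view concrete, here is a minimal sketch of how a small relational database could be turned into a temporal heterogeneous graph. The tables (`users`, `orders`), the `ts` timestamp column, and the `build_graph` helper are all hypothetical names chosen for illustration; this is not KumoRFM's or RelBench's actual code.

```python
from collections import defaultdict

# Hypothetical toy database: every row has a primary key and a timestamp `ts`.
tables = {
    "users": [
        {"user_id": 1, "ts": 0},
        {"user_id": 2, "ts": 5},
    ],
    "orders": [
        {"order_id": 10, "user_id": 1, "ts": 3},
        {"order_id": 11, "user_id": 1, "ts": 8},
        {"order_id": 12, "user_id": 2, "ts": 9},
    ],
}
primary_keys = {"users": "user_id", "orders": "order_id"}
# (child table, foreign-key column) -> parent table
foreign_keys = {("orders", "user_id"): "users"}


def build_graph(tables, primary_keys, foreign_keys):
    """Return (nodes, edges) for a temporal heterogeneous graph.

    nodes: {(table_name, primary_key_value): timestamp}
    edges: {(child_table, fk_column, parent_table): [(child_node, parent_node), ...]}
    """
    nodes = {}
    edges = defaultdict(list)

    # One node per record; the table name acts as the node type.
    for name, rows in tables.items():
        pk = primary_keys[name]
        for row in rows:
            nodes[(name, row[pk])] = row["ts"]

    # One edge per primary-foreign key link; the link acts as the edge type.
    for (child, fk_col), parent in foreign_keys.items():
        pk = primary_keys[child]
        for row in tables[child]:
            edges[(child, fk_col, parent)].append(
                ((child, row[pk]), (parent, row[fk_col]))
            )
    return nodes, dict(edges)


nodes, edges = build_graph(tables, primary_keys, foreign_keys)
```

In this sketch the table name plays the role of the node type and each foreign-key column defines an edge type, which is what makes the graph heterogeneous; the per-node timestamps are what make it temporal.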

KumoRFM employs a table-agnostic encoding scheme and a novel Relational Graph Transformer to reason across arbitrary multi-modal data and complex multi-table structures. This architecture enables in-context learning (ICL) by dynamically generating task-specific context labels from historical snapshots via temporal neighbor sampling, allowing the model to reason about the dependencies between subgraph-label pairs in a single forward pass.
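The context-generation idea can be sketched as follows, under simplifying assumptions. The task ("will this user place an order within the next `horizon` time units?"), the `orders` data, and the helpers `sample_history`, `label`, and `build_context` are hypothetical and only illustrate the principle: at each historical snapshot time, the sampled subgraph sees only records at or before the snapshot, while the label is computed from records after it.

```python
# Hypothetical order log: (user_id, timestamp) pairs.
orders = [(1, 3), (1, 8), (2, 9), (2, 14)]


def sample_history(user_id, t, max_neighbors=2):
    """Temporal neighbor sampling: the user's most recent orders with ts <= t."""
    past = sorted(
        (ts for uid, ts in orders if uid == user_id and ts <= t),
        reverse=True,
    )
    return past[:max_neighbors]


def label(user_id, t, horizon):
    """Context label: did the user order in the window (t, t + horizon]?"""
    return any(uid == user_id and t < ts <= t + horizon for uid, ts in orders)


def build_context(user_ids, snapshot_times, horizon):
    """Pair each (user, snapshot) subgraph with the label observed after it."""
    context = []
    for t in snapshot_times:
        for uid in user_ids:
            context.append(
                {
                    "user": uid,
                    "time": t,
                    "history": sample_history(uid, t),
                    "label": label(uid, t, horizon),
                }
            )
    return context


context = build_context([1, 2], snapshot_times=[5, 10], horizon=5)
```

Because each history is restricted to timestamps at or before its snapshot, no information from the label window leaks into the input. A query at prediction time would be built the same way with the current time as the snapshot and the label left unknown, so that a model can condition on the context pairs and predict the missing label.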

We demonstrate that KumoRFM delivers accurate predictions in under one second and outperforms conventional supervised approaches on the RelBench benchmark, paving the way for scalable and explainable AI on enterprise data.