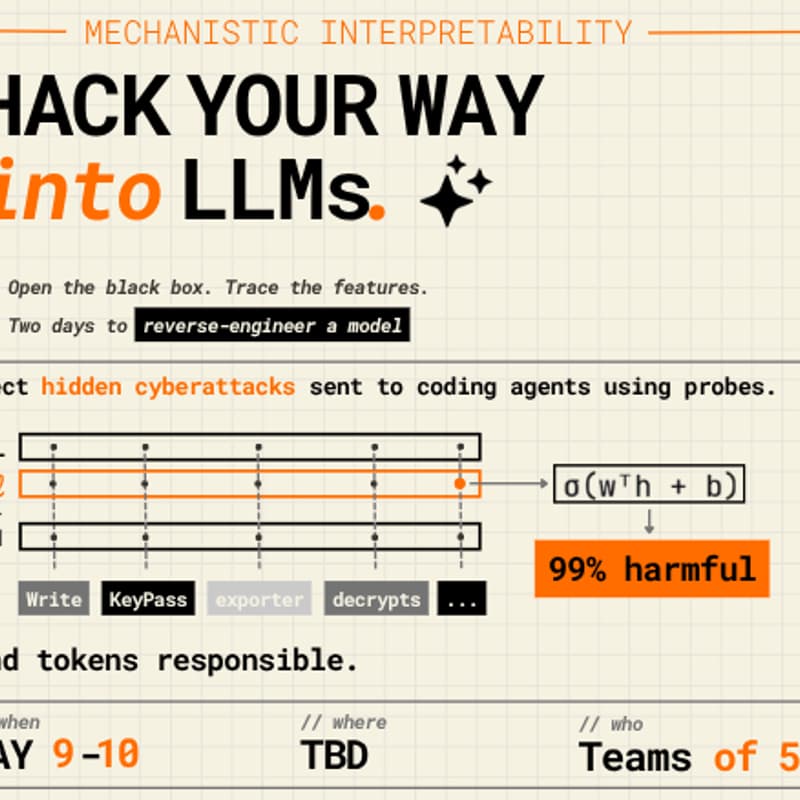

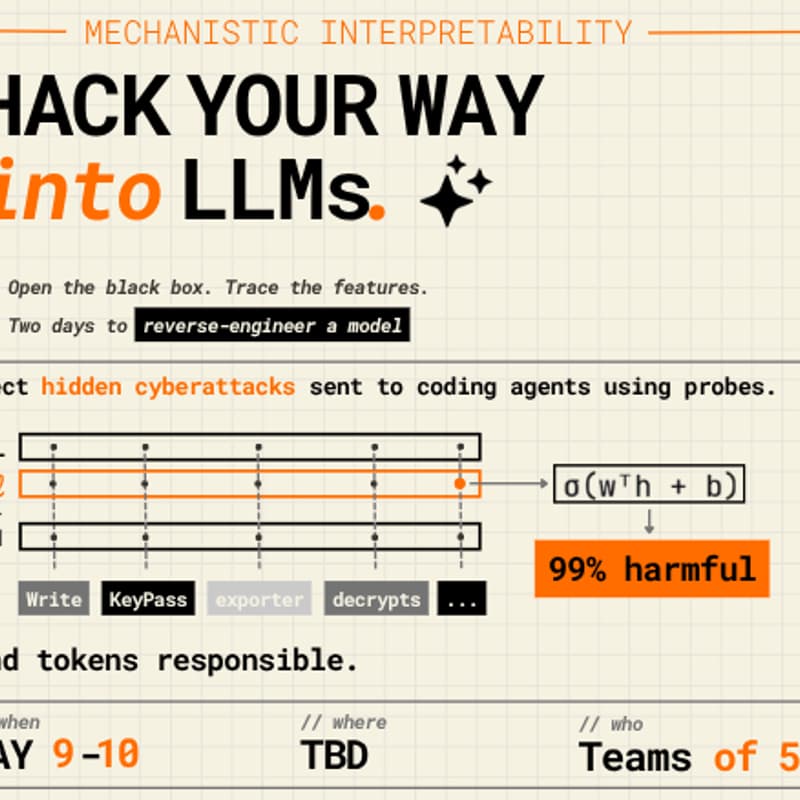

Hack your way into LLMs

Can you read a model's mind before it writes the exploit?

SAIL is hosting a mechanistic interpretability hackathon at EPFL. A curated adversarial dataset. Five coding models. One question: what's actually happening inside when they decide to help an attacker?

Two tracks:

Probes as monitors: classify internal activations to detect harmful compliance before the model generates its response

Attribution: pinpoint which inputs actually push a model toward helping an attacker

What you get: Claude Code credits, GPU instances, mentors, and food all weekend. A real shot at a publishable result in 48 hours.

Prizes: CHF 1,000 · CHF 500 · CHF 300

PyTorch fluency + curiosity about model internals. That's the bar.

Register by May 6th

May 9–10 · Teams of 5 · EPFL

Hosted by SAIL × MLO Lab