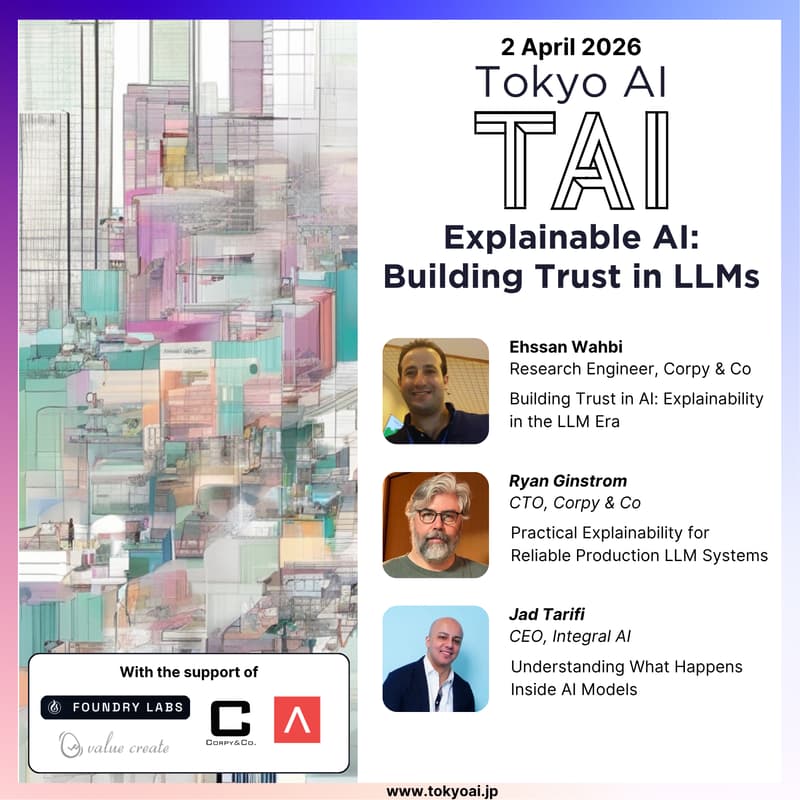

Building Trust in LLMs: Explainability, Interpretability & Production Systems

Join us for an exploration of trust, transparency, and reliability in Large Language Model (LLM) systems.

This event brings together expert perspectives across research and production, covering Explainable AI (XAI), practical strategies for deploying LLMs, and emerging approaches to understanding how these models work internally. Topics include interpretability challenges, RAG traceability and confidence scoring, as well as mechanistic interpretability techniques such as circuit discovery and sparse representations.

Designed for AI practitioners and technical leaders, this session offers actionable insights into building more transparent, reliable, and production-ready AI systems.

Agenda

18:00 Doors open

18:30 - 19:00 Building Trust in AI: Explainability in the LLM Era (Ehssan Wahbi)

19:00 - 19:30 Practical Explainability for Reliable Production LLM Systems (Ryan Ginstrom)

19:30 - 20:00 Understanding What Happens Inside AI Models (Jad Tarifi)

20:00 - 21:00 Networking

21:00 Doors close

Speakers

Talk 1 - Building Trust in AI: Explainability in the LLM Era

Speaker: Ehssan Wahbi

Abstract: Large Language Models (LLMs) have rapidly transformed artificial intelligence, enabling powerful applications across natural language processing, vision, automation, and decision support. However, their increasing scale and complexity make it difficult to understand how these models generate their outputs. This talk explores the role of Explainable Artificial Intelligence (XAI) in improving the transparency and reliability of LLM-based systems. It discusses the limitations of traditional XAI techniques when applied to large-scale generative models and highlights emerging approaches that emphasize human-centered and application-oriented explanations. The presentation also outlines current research directions and practical approaches for improving interpretability, with the goal of supporting the development and responsible deployment of trustworthy AI systems.

Bio: Ehssan Wahbi is a researcher and AI practitioner specializing in machine learning, sign language processing, and explainable AI. He is the author of SATSLP, a Semi-Auto-Regressive Transformer for Sign Language Production. At Corpy, he contributes to the research and development of advanced AI technologies as part of the XAI team, focusing on improving transparency and trust in modern AI systems, including object detection models, graph neural networks (GNNs), and large language models (LLMs).

Talk 2 - Practical Explainability for Reliable Production LLM Systems

Speaker: Ryan Ginstrom

Abstract: As LLMs move into production, their probabilistic nature creates unpredictable failures. This talk offers an engineering roadmap for building robust production LLM systems, organized around operational and behavioral layers. Attendees will explore techniques such as RAG traceability and confidence scoring across tiered modes.

Bio: Ryan is a CTO and seasoned engineering leader specializing in AI engineering, LLMs, and RAG systems. He is a graduate of Stanford University’s Department of Linguistics and holds multiple patents related to NLP and conversational systems. At Corpy, he leads the engineering organization and scalable infrastructure development, focusing on building high-performance production ML systems. He leverages over 20 years of experience across startups and major tech firms to bridge Japanese and global AI innovation.

Talk 3 - Understanding What Happens Inside AI Models

Speaker: Jad Tarifi

Abstract: What are LLMs and other deep learning models actually doing under the hood? I'll cover the main ideas behind mechanistic interpretability, from circuit discovery to sparse autoencoders to dictionary learning, and how they reveal surprisingly clean structure hidden inside dense neural network layers. Then I'll propose a simple architectural change that makes interpretability first-class: factor each dense layer into an overcomplete dictionary and a projection, so the model thinks in sparse, readable features by default.

Bio: Jad Tarifi is an AGI expert and the CEO and co-founder of Integral AI, where he is driving industry-changing breakthroughs in scalable, energy-efficient AGI to bring humanity closer to superintelligence. With over 20 years of experience, he has dedicated much of his career to pioneering approaches to AGI.

Before founding Integral AI, Jad spent nearly a decade at Google AI, where he founded and led its first generative AI team and spearheaded research on learning from limited data. He holds a Ph.D. in Artificial Intelligence, with a dissertation focusing on brain-inspired and mathematically rigorous theories of intelligence, and an MSc in Quantum Computing. Jad is also the author of the Freedom Series, a four-volume guidebook that explores the future of AI, human potential, and societal transformation in the age of AGI.

Organizers

Ilya Kulyatin is an entrepreneur with work and academic experience in the US, Netherlands, Singapore, UK, and Japan. He holds a BA in Economics, an MA in Finance, and an MSc in Machine Learning. He's a three-time founder, now helping grow Japan's local AI ecosystem through a not-for-profit community, Tokyo AI (TAI), while building an AI-native system integrator and solutions provider, Foundry Labs株式会社.

Supporters

Tokyo AI (TAI) is the largest technical AI community in Japan, with 4,000+ members mainly based in Tokyo (engineers, researchers, investors, product managers, and corporate innovation managers).

Value Create is a management advisory and corporate value design firm offering services such as business consulting, education, corporate communications, and investment support to help companies and individuals unlock their full potential and drive sustainable growth.

Antler is the world's most active early-stage investor (cited by Dealroom as the #1 most active AI investor globally in 2024), with a community of 12,000+ founders across 27 cities worldwide. Antler Japan is now accepting applications for its 6th Inception Program, beginning May 11, 2026.

Privacy Policy

We will process your email address for the purposes of event-related communications and ongoing newsletter communications. You may unsubscribe from the newsletter at any time. Further details on how we process personal data are available in our Privacy Policy.