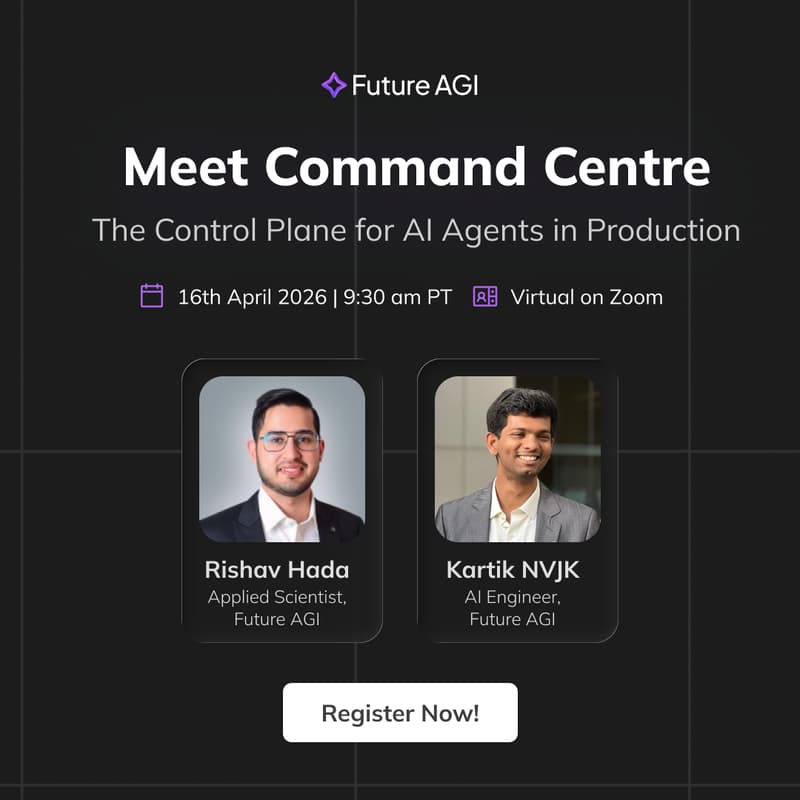

Meet Command Centre: The Control Plane for AI Agents in Production

🎯 Overview

AI teams are routing billions of tokens through centralized gateways that hold the keys to every model, every agent, and every provider, with little to no runtime governance over them. The recent LLMlite attack exposed just how catastrophic that gap can be. This session is a practical engineer's guide to building secure, observable, and governed LLM infrastructure before something goes wrong.

Summary

As AI stacks grow more complex (multi-model, multi-agent, multi-provider), the gateway layer has quietly become the single most critical and least governed piece of infrastructure your team owns. One compromised dependency, one misconfigured route, or one unbounded cost spike can take down or expose your entire AI layer.

We'll break down the real attack surfaces engineers need to care about today, and walk through what a proper Command Centre for your LLM infrastructure looks like in practice, covering security, routing, observability, cost control, and policy enforcement. No theory: just architecture patterns and a live demo you can apply the same week.

🚀 What You'll Learn

Understand why the LLM gateway layer is now the highest-value target in your AI stack and the specific threat vectors most teams are blind to

Learn how to enforce runtime guardrails and content policies across your entire model fleet without touching individual agent or app code

Build intelligent routing with automatic failover so no single provider outage disrupts your production systems

Implement cost tracking and budget controls per team, project, or workload - and stop flying blind on LLM spend

Set up full observability over every LLM call: latency, errors, token usage, and policy violations in one place

Know when and how to self-host your gateway for data residency, compliance, and air-gapped requirements
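
For a flavour of two of the topics above (automatic failover and per-team budget controls), here is a minimal sketch. It is not Command Centre's API or the implementation shown in the session: the `Gateway` class, the provider functions, the team name, and the dollar figures are all hypothetical, chosen only to illustrate the pattern.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical provider signature: takes a prompt, returns (reply, cost in USD).
Provider = Callable[[str], Tuple[str, float]]


class BudgetExceeded(Exception):
    """Raised when a team has already used up its spend limit."""


@dataclass
class Gateway:
    providers: List[Tuple[str, Provider]]  # tried in the order given
    budgets_usd: Dict[str, float]          # per-team spend limit
    spent_usd: Dict[str, float] = field(default_factory=dict)

    def complete(self, team: str, prompt: str) -> Tuple[str, str]:
        spent = self.spent_usd.get(team, 0.0)
        # Budget check happens before any provider is called.
        if spent >= self.budgets_usd.get(team, 0.0):
            raise BudgetExceeded(team)
        errors = []
        for name, call in self.providers:
            try:
                reply, cost = call(prompt)
            except Exception as exc:  # any provider failure triggers failover
                errors.append(f"{name}: {exc}")
                continue
            self.spent_usd[team] = spent + cost
            return name, reply
        raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stand-in providers (assumptions, not real SDK calls).
def flaky_primary(prompt: str) -> Tuple[str, float]:
    raise TimeoutError("simulated outage")


def stable_fallback(prompt: str) -> Tuple[str, float]:
    return f"echo: {prompt}", 0.25


gateway = Gateway(
    providers=[("primary", flaky_primary), ("fallback", stable_fallback)],
    budgets_usd={"search-team": 0.5},
)
```

Calling `gateway.complete("search-team", "hi")` skips the failing primary and answers from the fallback; once the team's recorded spend reaches its limit, further calls raise `BudgetExceeded` before any provider is contacted. Because both checks live in the gateway, agent and app code stay unchanged.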

Who Should Attend

AI/ML engineers, platform and infrastructure engineers, security engineers, and technical leads managing AI systems in production. Also relevant for engineering managers, CTOs, and VPs of Engineering making architectural decisions around multi-model or agentic stacks.

Why This, Why Now

The tooling in this space has moved faster than the security and governance thinking around it. Most teams are one bad dependency, one unpinned package, or one missing policy away from a serious incident. This session closes that gap: live demo, real architecture, no fluff. The recording is available to registered attendees only.

→ Free. Limited seats.

About Future AGI

Future AGI is a San Francisco-based advanced agent engineering & optimization platform that helps you ship reliable and self-improving AI. Its core workflow follows a Simulate → Evaluate → Optimize → Protect pipeline. It simulates real-world scenarios by generating diverse synthetic datasets, including edge cases, to rigorously test AI agents before deployment. It then evaluates agents across multiple modalities (text, image, audio), pinpointing errors with precision. The platform optimizes performance by automatically refining prompts, comparing agentic workflow configurations, and incorporating evaluation feedback to close the improvement loop. Finally, it protects applications in production through real-time observability, diagnostics, and built-in safety metrics that block unsafe content with minimal latency.

🌐 Follow us on LinkedIn to get the latest updates on events and new launches.