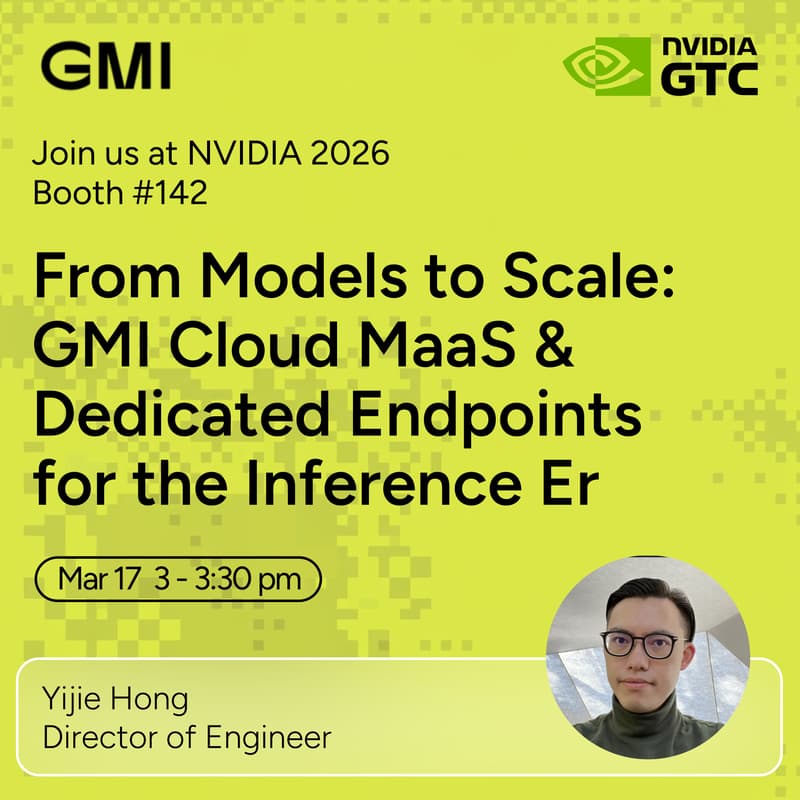

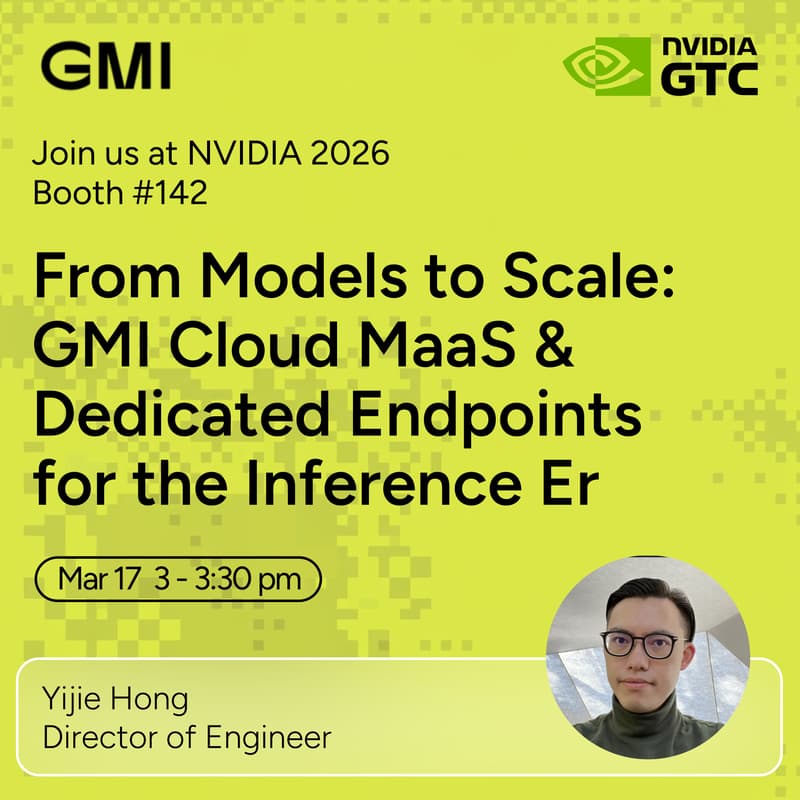

From Models to Scale: GMI Cloud MaaS & Dedicated Endpoints for the Inference Era

Join GMI Cloud for a live demo showing how teams move from model access → production-scale inference.

As AI adoption accelerates, the challenge isn’t just accessing models; it’s deploying and scaling them reliably. In this session, we’ll show how GMI Cloud enables both:

→ Model-as-a-Service (API-first model access)

→ Dedicated Endpoints (high-performance inference at scale)

If you’re building LLM apps, AI agents, or multimodal products, this demo will show you how to simplify your inference layer.

What You’ll See

Model-as-a-Service (MaaS): API-First Model Access

• Access models across LLM, image, video, and audio

• Integrate via simple APIs, with no GPU setup required

• Automatically scale with built-in orchestration

• Ship AI features faster

Dedicated Endpoints: Production-Grade Inference

• Deploy custom or fine-tuned models

• Use dedicated GPU resources for consistent performance

• Achieve low latency and high throughput

• Run reliable, high-traffic AI workloads

Why Attend

• See AI inference workflows in action

• Learn how teams deploy and scale faster

• Explore both API-based access and dedicated compute infrastructure

• Meet the GMI Cloud team at GTC

⚡ See you at Booth 142!