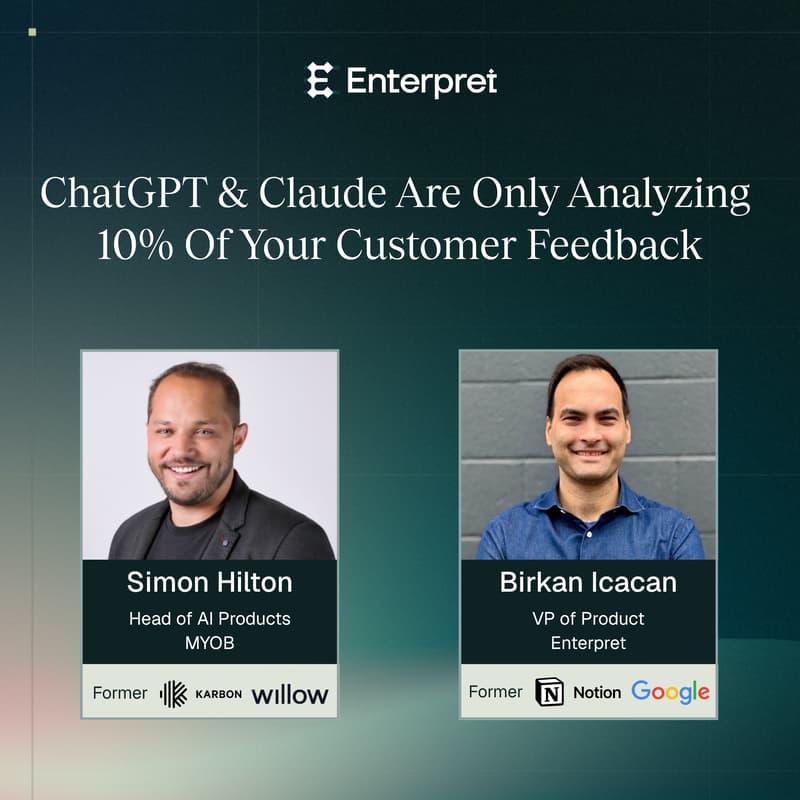

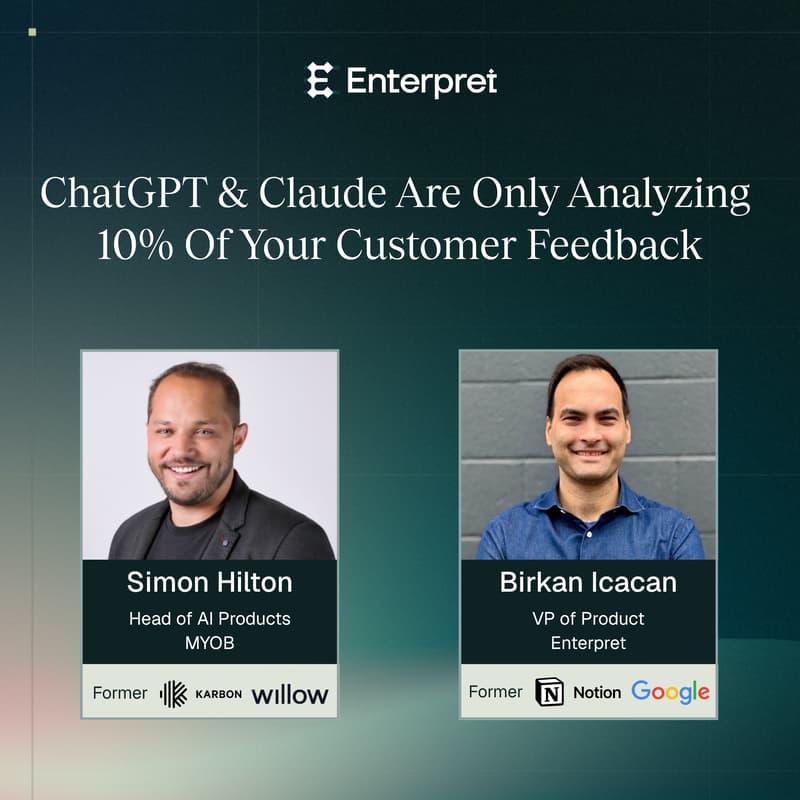

ChatGPT and Claude Are Only Analyzing 10% of Your Customer Feedback

You asked AI what your customers want. The answer looked right. Was it?

Most teams start here: paste in tickets, run a prompt, get a confident summary. It feels like progress, and for a while it is. The cracks show up later: in churn, in missed targets, in a roadmap that drifted without anyone noticing. The feedback was always there. It just wasn't being read correctly.

The problem isn't that you used AI. It's that the tools have limitations that don't announce themselves.

Simon Hilton (Head of AI Products at MYOB, author of The Product Analytics Compass) and Birkan Icacan (VP of Product, formerly of Google and Notion) have both lived this transition. In this session, they get specific about where ad hoc AI analysis quietly breaks down and what fills the gap.

Most of your feedback never influences the answer: LLMs silently drop data when the context gets large. Enterpret's analysis shows that only about 10% of feedback actually shapes the output.

Your answers change because nothing is anchored: Without consistent classification, the same question asked twice returns different results, so there is no foundation to trend or compare against.

You can't prioritize by value if value isn't in the data: Feedback text doesn't know which accounts are at risk or which problems affect your highest-ARR customers. That layer has to be connected.

Confident prose isn't the same as complete analysis: The output always sounds thorough. That's what makes the gaps hard to spot.

If you're in product or CX and using AI for feedback today, you'll leave with a clear diagnosis of where your current approach breaks down and a concrete framework for what to do instead.