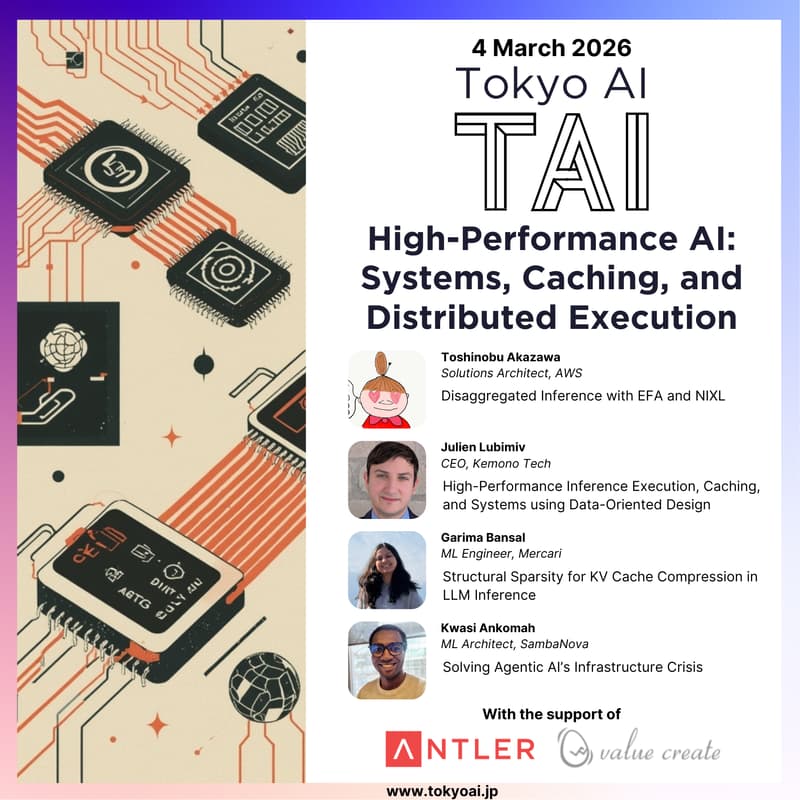

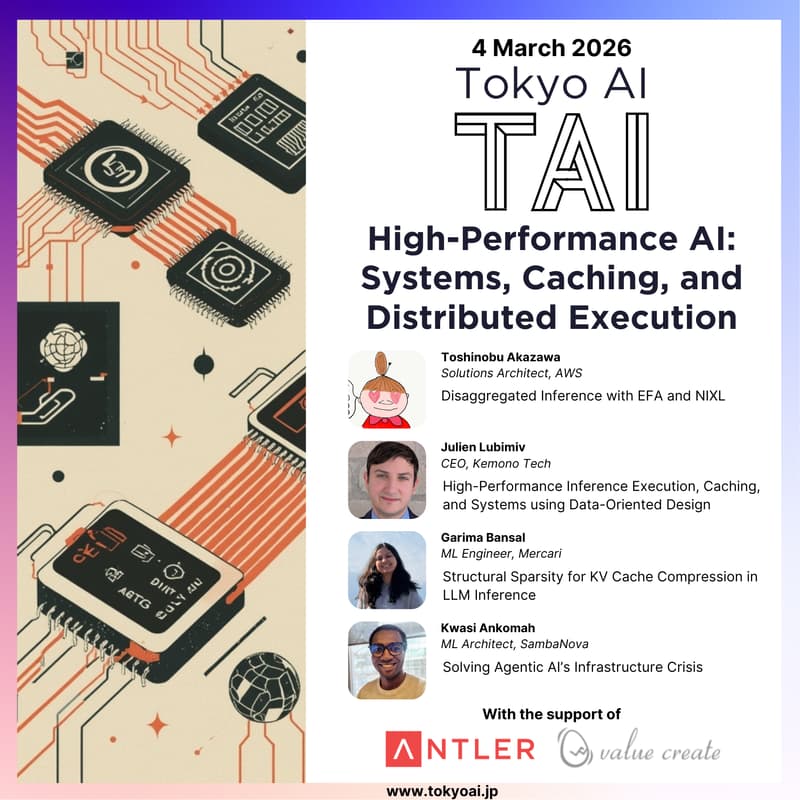

High-Performance AI Inference: Systems, Caching, and Distributed Execution

As large language models move into production, inference performance is increasingly defined by systems-level decisions, not model architecture or prompts.

This session explores the infrastructure and low-level engineering challenges behind efficient LLM inference, including KV cache movement, memory bandwidth, cache efficiency, distributed execution, and long-context optimization.

Across four technical talks, we’ll cover disaggregated inference on modern cloud hardware, data-oriented design for high-performance inference engines, structural sparsity techniques for KV cache compression, and infrastructure for agentic AI workloads.

The event is designed for engineers and researchers working on LLM infrastructure, inference engines, and ML systems, and concludes with networking, food, and drinks.

Agenda

18:00 Doors open

18:30 - 18:50 Disaggregated Inference with EFA and NIXL (Toshinobu Akazawa - Solutions Architect, AWS)

18:50 - 19:10 High-Performance Inference Execution, Caching, and Systems using Data-Oriented Design (Julien Lubimiv - CEO, Kemono Tech)

19:10 - 19:30 Structural Sparsity for KV Cache Compression in LLM Inference (Garima Bansal - ML Engineer, Mercari)

19:30 - 19:50 Solving Agentic AI’s Infrastructure Crisis (Kwasi Ankomah - ML Architect, SambaNova)

19:50 - 20:00 Buffer

20:00 - 21:00 Networking, Food & Drinks

21:00 Doors close

Speakers

Talk 1 - Disaggregated Inference with EFA and NIXL

Speaker: Toshinobu Akazawa (Solutions Architect, AWS)

Abstract: In disaggregated inference for large language models, KV cache transfer between instances often becomes a performance bottleneck. This session summarizes findings from my research into NIXL's EFA support. NIXL supports AWS Neuron devices through its libfabric backend; I will cover the current state of disaggregated inference on AWS and the architecture of vLLM's NIXL integration.

Bio: Worked as an LSI designer for the Tofu interconnect of the supercomputer "Fugaku" at Fujitsu. Subsequently served as a backend engineer at DeNA and a software engineer at PKSHA. Since 2020, has worked at AWS as a Solutions Architect and SA People Manager, currently providing technical support focused on HPC/ML infrastructure for SaaS customers.

Talk 2 - High-Performance Inference Execution, Caching, and Systems using Data-Oriented Design

Speaker: Julien Lubimiv (CEO, Kemono Tech)

Abstract: In the era of generative AI models, our inference engines and frameworks are bottlenecked not by compute, but by memory bandwidth and cache efficiency. Data-oriented design is a popular solution in game engines for solving these problems in a clean and efficient manner. In this talk, we will explore low-level data-oriented design and its advantages for caching, updating, and distributing data for hardware and networks.
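To make the layout idea in this abstract concrete, here is a minimal sketch contrasting an array-of-structs with a struct-of-arrays, the layout most often associated with data-oriented design. The `Request` and `RequestTable` names and their fields are hypothetical, chosen only for illustration, and are not taken from the talk. Python is used for brevity; it shows the layout only, and the cache and bandwidth benefits are realized in languages such as C or C++ where the memory layout is under direct control.

```python
from array import array
from dataclasses import dataclass


@dataclass
class Request:
    """Array-of-structs element: one heap object per request (hypothetical)."""
    prompt_len: int
    generated: int
    priority: int


def tokens_in_flight_aos(requests):
    # Visits every Request object even though priority is never read.
    return sum(r.prompt_len + r.generated for r in requests)


class RequestTable:
    """Struct-of-arrays: one packed, contiguous buffer per field."""

    def __init__(self):
        self.prompt_len = array("I")  # unsigned ints stored back to back
        self.generated = array("I")
        self.priority = array("I")

    def add(self, prompt_len, generated, priority):
        self.prompt_len.append(prompt_len)
        self.generated.append(generated)
        self.priority.append(priority)

    def tokens_in_flight(self):
        # Streams through only the two columns this pass needs.
        return sum(self.prompt_len) + sum(self.generated)


rows = [(512, 40, 1), (2048, 7, 0), (128, 300, 2)]
aos = [Request(*row) for row in rows]
soa = RequestTable()
for row in rows:
    soa.add(*row)

print(tokens_in_flight_aos(aos), soa.tokens_in_flight())  # prints: 3035 3035
```

Both layouts give the same answer; the difference is that a pass over one field in the struct-of-arrays form reads a single contiguous buffer instead of hopping between separately allocated objects.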

Bio: Low-level engineer with prior experience on CANSOFCOM operational systems and as an AI engineer at Stability AI. Now CEO of a new startup, Kemono Tech, which focuses on low-level machine learning frameworks, networking, and implementation, together with cofounder Alex Goodwin, author of SwarmUI. Currently working on AniStudio, a ggml-based framework and engine written entirely in C/C++.

Talk 3 - Structural Sparsity for KV Cache Compression in LLM Inference

Speaker: Garima Bansal (ML Engineer, Mercari)

Abstract: As large language models move into real-time and resource-constrained environments, efficient inference has become a critical systems challenge. While KV caching reduces computational complexity by storing intermediate attention states, the linear growth of the KV cache remains a critical memory bottleneck for long-context tasks like chain-of-thought reasoning, code generation, and document-level QA. This talk explores the current landscape of KV cache compression methods and introduces my research on leveraging structural sparsity to preserve connectivity patterns within KV matrices while substantially reducing memory footprint.
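The linear growth mentioned in this abstract can be made concrete with a back-of-the-envelope calculation. The sketch below uses the standard sizing for an uncompressed KV cache (keys plus values, per layer, per KV head, per token). The model shape is a hypothetical example and is not taken from the talk.

```python
def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim,
                   bytes_per_elem=2, batch_size=1):
    """Bytes needed to cache keys and values for seq_len tokens."""
    # Factor of 2: one tensor for keys and one for values in every layer.
    per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem
    return per_token * seq_len * batch_size


# Hypothetical model shape: 32 layers, 8 KV heads, head_dim 128, fp16 values.
for seq_len in (4_096, 32_768, 131_072):
    gib = kv_cache_bytes(seq_len, 32, 8, 128) / 2**30
    print(f"{seq_len:>7} tokens -> {gib:.2f} GiB")
# prints:
#    4096 tokens -> 0.50 GiB
#   32768 tokens -> 4.00 GiB
#  131072 tokens -> 16.00 GiB
```

Under these assumptions each token costs 128 KiB of cache per sequence, so a 32x longer context needs 32x the memory, which is the pressure that compression methods aim to relieve.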

Bio: Machine Learning Engineer at Mercari, currently focused on optimizing user growth and marketing campaigns through data science. Additionally involved with the startup Awsmo, exploring time series forecasting to reduce cloud infrastructure costs by predicting traffic patterns. A 2025 graduate of IIT Kharagpur (India), where her Bachelor’s thesis research focused on improving the memory-performance tradeoff for large-scale LLM inference and training.

Talk 4 - Solving Agentic AI’s Infrastructure Crisis

Speaker: Kwasi Ankomah (ML Architect, SambaNova)

Abstract: The next frontier of AI isn't just about bigger models—it's about agents that can think, plan, and act autonomously. But behind every intelligent agent lies an infrastructure crisis: traditional hardware wasn't built for the unpredictable, multi-step workloads of agentic AI. This presentation discusses the SN50™, SambaNova's answer to this challenge. By combining a purpose-built dataflow architecture, innovative agentic caching for ultra-fast model switching, and cloud-scale deployment supporting up to 256 accelerators and 10 trillion parameter models, we're enabling enterprises to deploy agentic inference that's not just faster, but also finally cost-effective.

Bio: Kwasi Ankomah is a Lead AI Architect at SambaNova Systems, where he leads solution efforts on generative AI, large language models, and agentic AI applications. With a background spanning the UK’s Financial Conduct Authority and the consulting and financial sectors, he brings deep expertise in applying AI to complex, regulated domains. He holds an MS in Data Science. Kwasi is passionate about AI leadership, diversity in tech, and responsible AI development. He’s a recognized voice in the AI infrastructure space, having appeared on podcasts like “The Neuron” and spoken at events including the AI Summit London, where he discussed why inference speed is the hidden bottleneck in scaling AI agents.

Organizers

Ilya Kulyatin: Fintech and AI entrepreneur with work and academic experience in the US, Netherlands, Singapore, UK, and Japan, with an MSc in Machine Learning from UCL.

Supporters

Tokyo AI (TAI) is the biggest AI community in Japan, with 4,000+ members mainly based in Tokyo (engineers, researchers, investors, product managers, and corporate innovation managers).

Value Create is a management advisory and corporate value design firm offering services such as business consulting, education, corporate communications, and investment support to help companies and individuals unlock their full potential and drive sustainable growth.

Privacy Policy

We will process your email address for the purposes of event-related communications and ongoing newsletter communications. You may unsubscribe from the newsletter at any time. Further details on how we process personal data are available in our Privacy Policy.