Neural Architecture Search: Automated architecture selection for maximum performance

When training a custom computer vision model, finding the right balance of speed and accuracy can mean training multiple models, tweaking hyperparameters, and comparing results. This trial-and-error process consumes both engineering time and compute budget. If you are repeatedly training on the same dataset just to find the right configuration, there is now a much more efficient approach: Neural Architecture Search.

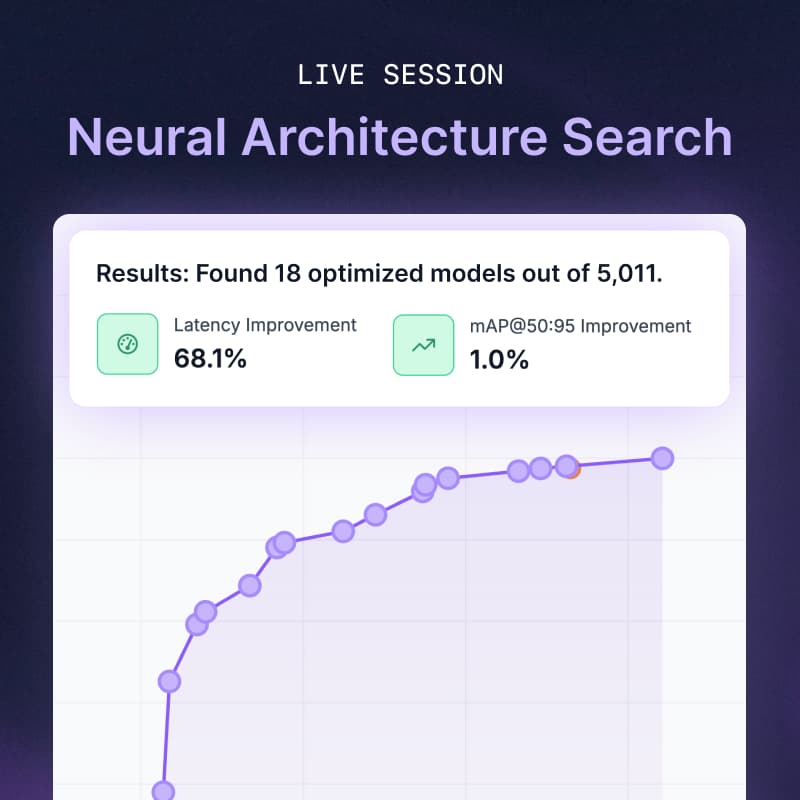

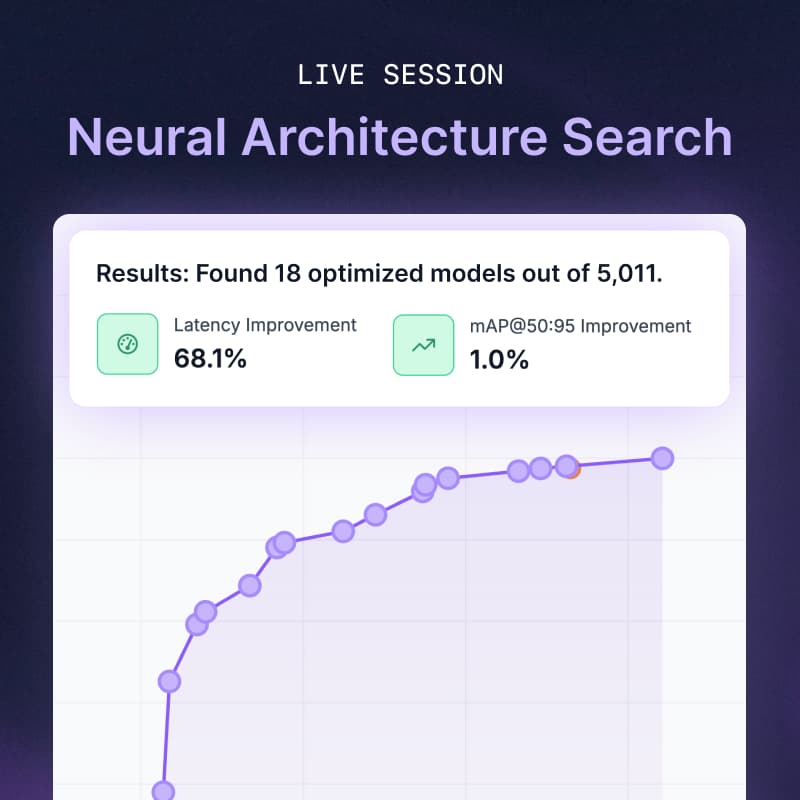

In this live session, Roboflow Product Manager Grant Nelson will showcase the new Neural Architecture Search (NAS) feature inside Roboflow Train. While you may know NAS as the underlying engine used when building the industry-leading RF-DETR model family, it is now a tool available directly to you. NAS discovers the optimal architecture and trains a model at the same time. Grant will show how NAS generates thousands of candidate models during one training run, allowing you to find the right architecture for your dataset.

During the walkthrough, you will see how to initiate a NAS training job and take a tour of the interface to compare speed and accuracy metrics. You will learn how a NAS run costs less than 1% of what it would take to train 3,000 models yourself, giving you the flexibility to choose from dozens of viable options to meet your model accuracy and latency requirements.
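
To make the speed-versus-accuracy comparison concrete, here is a minimal Python sketch of one way to shortlist candidates. It is not Roboflow's implementation or API: the `Candidate` class, the `pareto_front` and `pick` helpers, and all of the numbers are hypothetical, made up for illustration. The idea is to drop any candidate that another one beats on both accuracy and latency, then take the fastest remaining model that meets your requirements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str          # hypothetical label for a candidate architecture
    accuracy: float    # e.g. mAP on a validation set; higher is better
    latency_ms: float  # inference time per image; lower is better


def pareto_front(candidates):
    """Keep candidates that no other candidate beats on both accuracy and latency."""
    front = []
    for c in candidates:
        dominated = any(
            o.accuracy >= c.accuracy
            and o.latency_ms <= c.latency_ms
            and (o.accuracy > c.accuracy or o.latency_ms < c.latency_ms)
            for o in candidates
        )
        if not dominated:
            front.append(c)
    # Sort fastest first so the first match in pick() is the fastest viable model.
    return sorted(front, key=lambda c: c.latency_ms)


def pick(candidates, min_accuracy, max_latency_ms):
    """Return the fastest non-dominated candidate meeting both requirements, or None."""
    for c in pareto_front(candidates):
        if c.accuracy >= min_accuracy and c.latency_ms <= max_latency_ms:
            return c
    return None


# Made-up numbers for illustration only.
candidates = [
    Candidate("a", 0.62, 4.0),
    Candidate("b", 0.70, 7.5),
    Candidate("c", 0.68, 9.0),   # dominated by "b": slower and less accurate
    Candidate("d", 0.76, 15.0),
]

choice = pick(candidates, min_accuracy=0.65, max_latency_ms=10.0)
print(choice)
```

With these invented values, candidate "c" drops out because "b" is both faster and more accurate, and "b" is the fastest remaining option that clears an accuracy floor of 0.65 within a 10 ms budget. Tightening either requirement changes the pick, which is the kind of trade-off the walkthrough explores in the interface.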

Every week we dive into the world of computer vision and explore new tools, interesting projects, and tutorials. This webinar is open to everyone, and we hope to see you there!

We'll also make time at the end for open Q&A.